# Rasa Documentation

> Documentation for building conversational AI with Rasa Pro, Rasa Studio, and the Rasa platform.

## API Specifications

### Rasa - Server Endpoints (1.0.0)

[Skip to main content](#__docusaurus_skipToContent_fallback)

Build your first agent in just a few minutes with [Rasa Copilot](https://hello.rasa.com/?utm_source=docs\&utm_medium=referral\&utm_campaign=docs_cta).

[](https://rasa.com/docs/docs/)

[Home](https://rasa.com/docs/docs/)[Rasa](https://rasa.com/docs/docs/pro/intro/)[Studio](https://rasa.com/docs/docs/studio/intro/)[Reference](https://rasa.com/docs/docs/reference/overview/)

[Blog](https://blog.rasa.com/)

[Community](#)

* [Community Hub](https://rasa.com/community/join/)

* [Forum](https://forum.rasa.com)

* [How to Contribute](https://rasa.com/community/contribute/)

* [Community Showcase](https://rasa.com/showcase/)

Search...

* Server Information

* getHealth endpoint of Rasa Server

* getInformation about your Rasa Pro License

* getVersion of Rasa

* getStatus of the Rasa server

* Tracker

* getRetrieve a conversations tracker

* delDelete tracker for a specific conversation

* postAppend events to a tracker

* putReplace a trackers events

* getRetrieve an end-to-end story corresponding to a conversation

* postRun an action in a conversation

* postInject an intent into a conversation

* postPredict the next action

* postAdd a message to a tracker

* Model

* postTrain a Rasa model

* postEvaluate stories

* postPerform an intent evaluation

* postPredict an action on a temporary state

* postParse a message using the Rasa model

* putReplace the currently loaded model

* delUnload the trained model

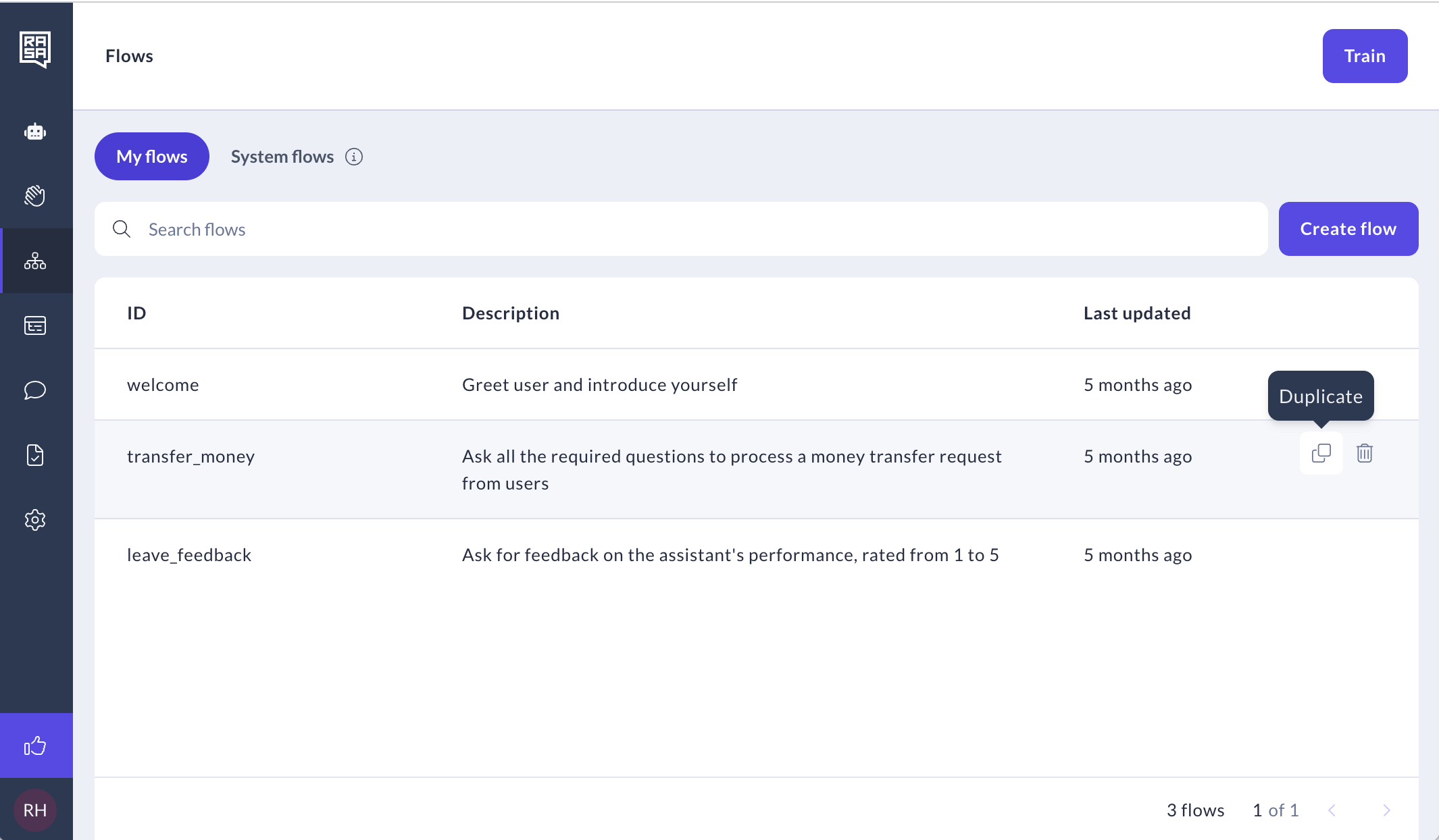

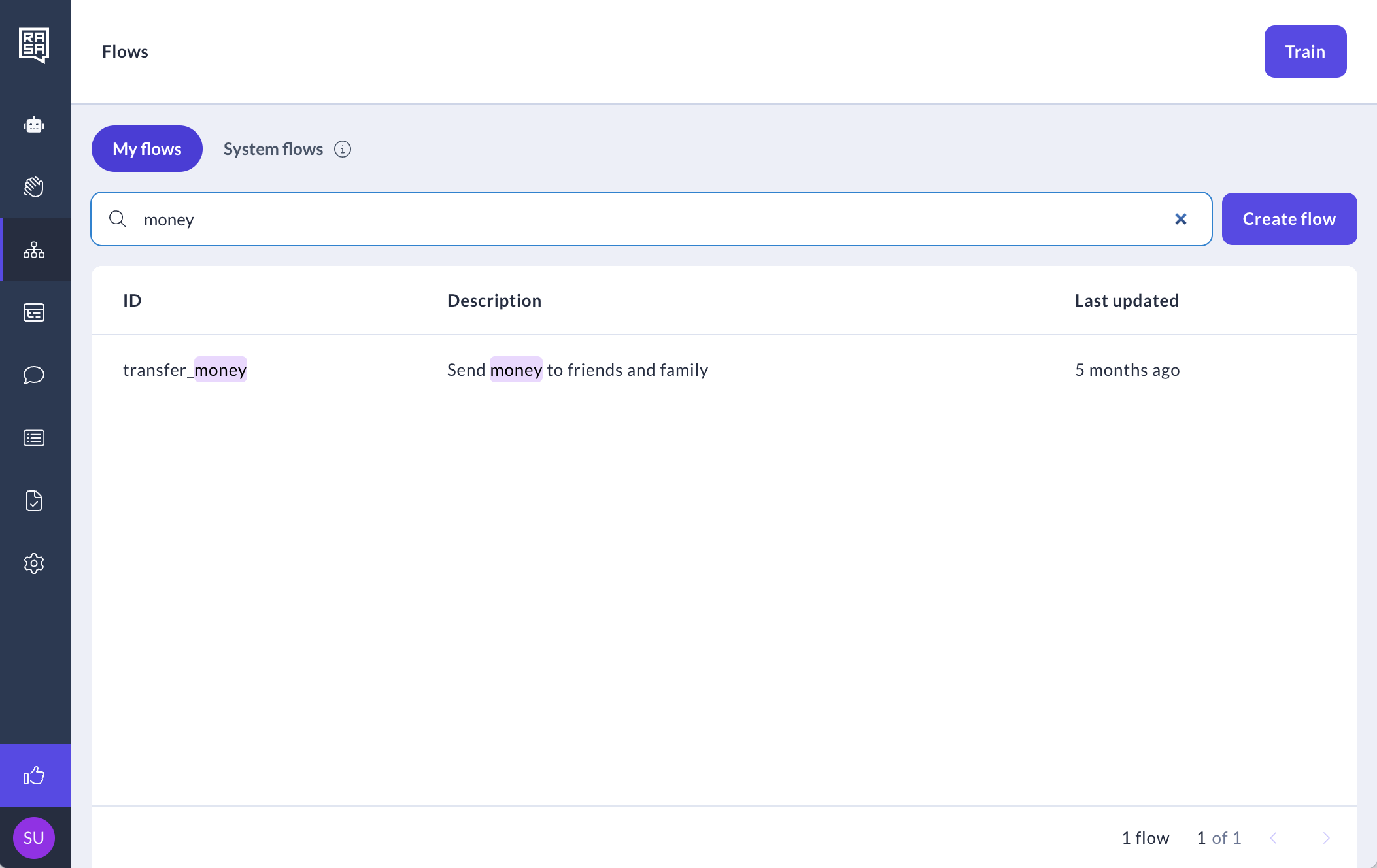

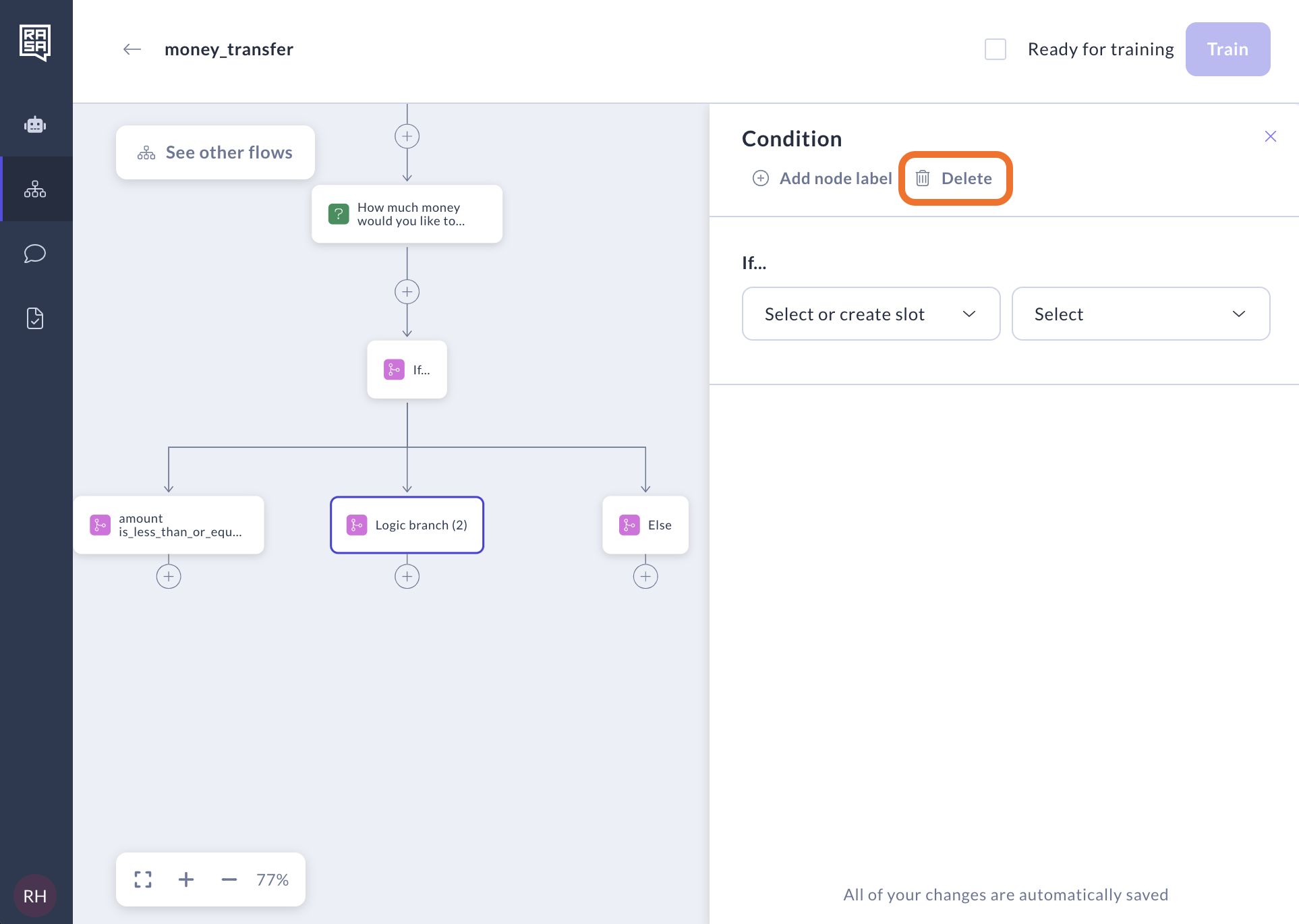

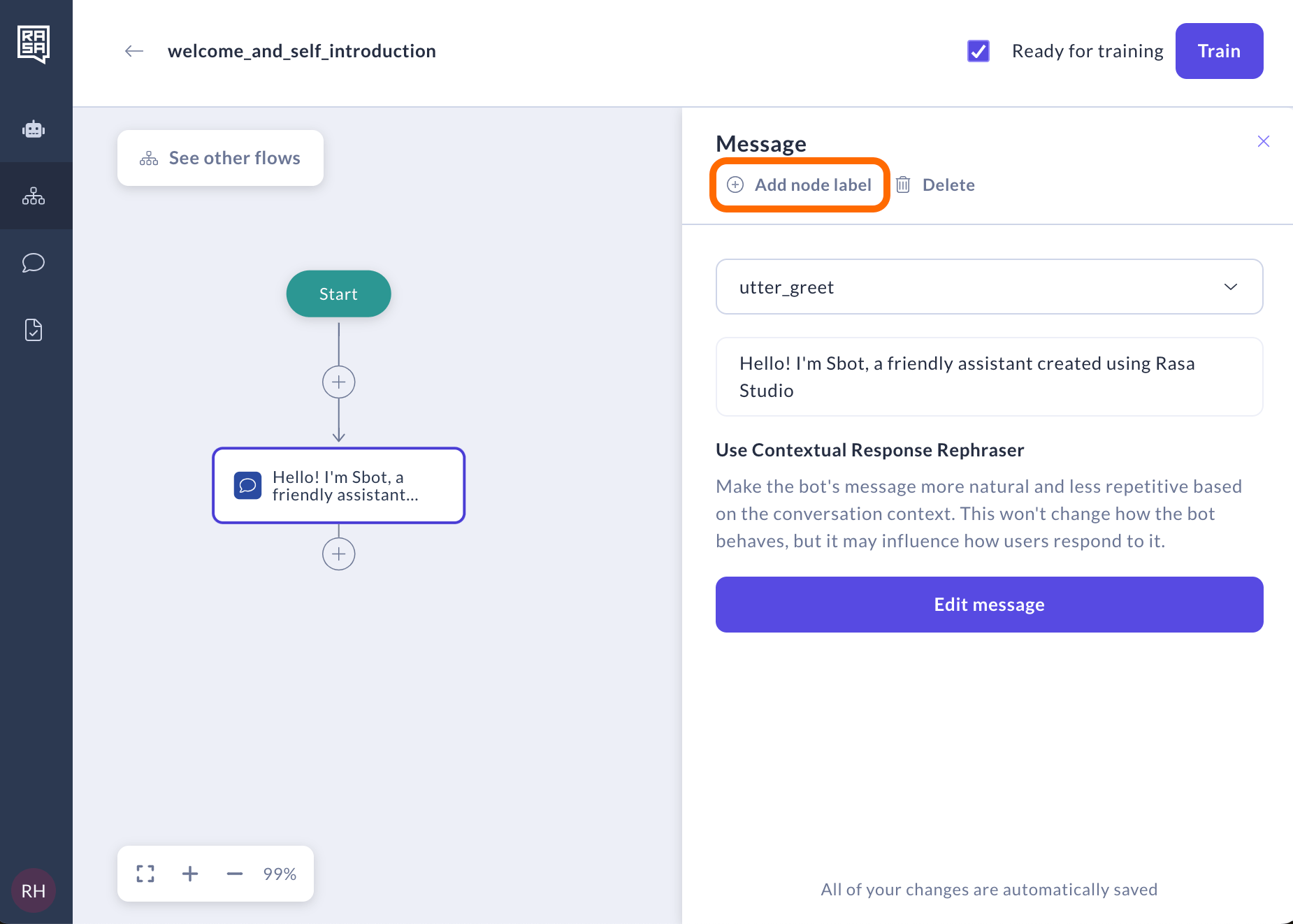

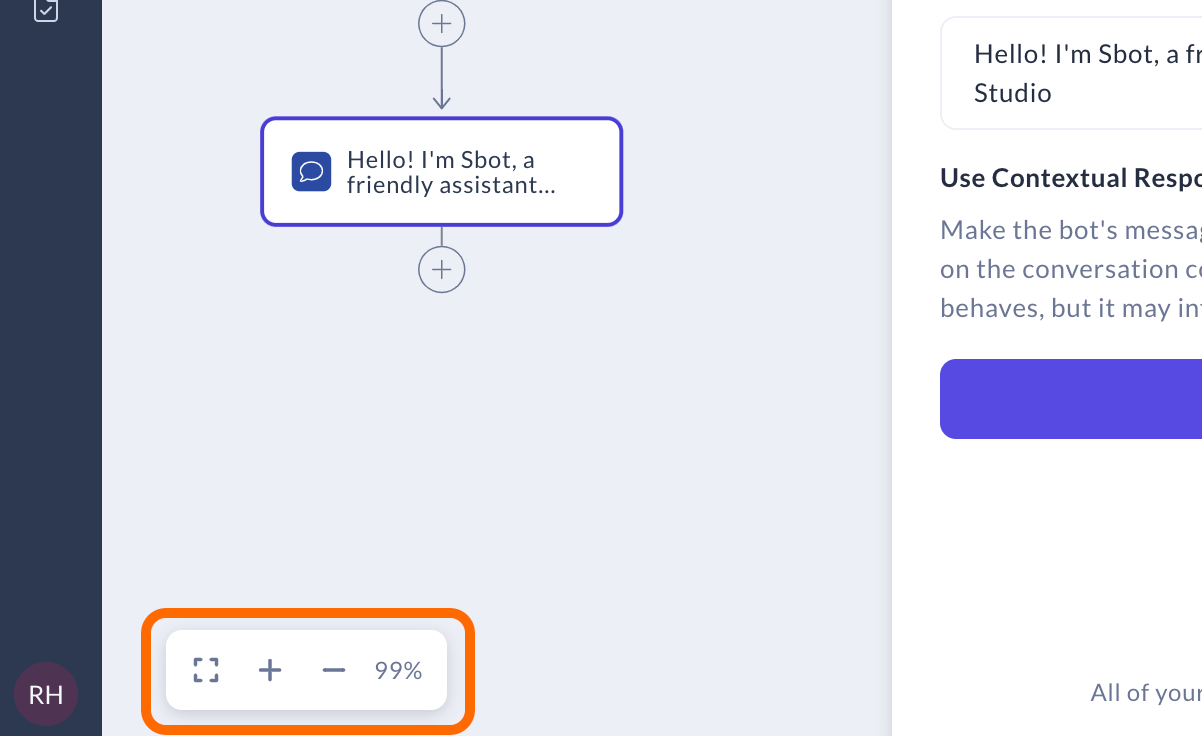

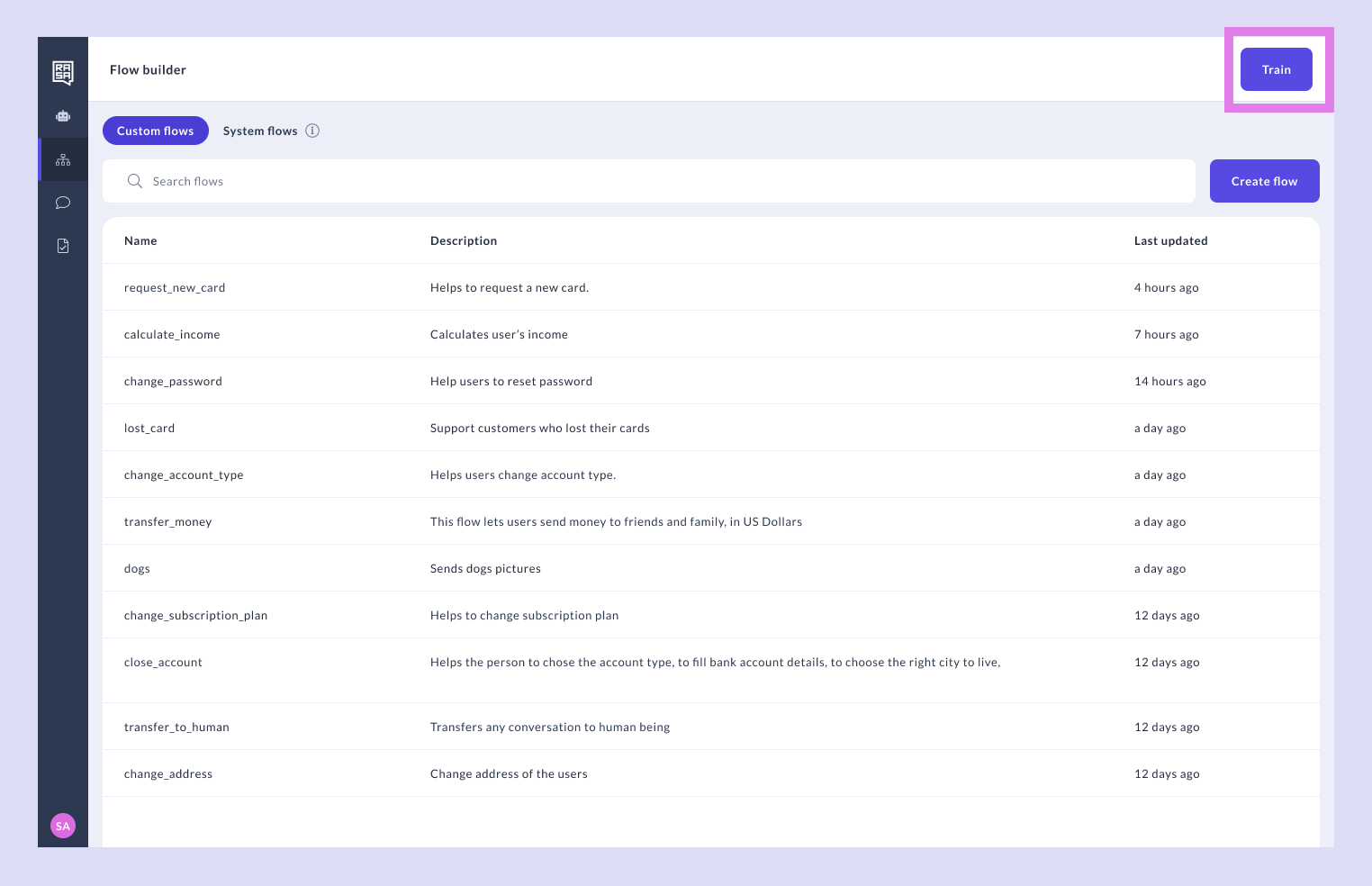

* Flows

* getRetrieve the flows of the assistant

* Domain

* getRetrieve the loaded domain

* Channel Webhooks

* postPost user message from a REST channel

* postPost user message from a custom channel

[API docs by Redocly](https://redocly.com/redoc/)

Download OpenAPI specification:[Download](https://rasa.com/docs/redocusaurus/pro.yaml)

The Rasa server provides endpoints to retrieve trackers of conversations as well as endpoints to modify them. Additionally, endpoints for training and testing models are provided.

#### [](#tag/Server-Information)Server Information

#### [](#tag/Server-Information/operation/getHealth)Health endpoint of Rasa Server

This URL can be used as an endpoint to run health checks against. When the server is running this will return 200.

##### Responses

**200**

Up and running

get/

Local development server

http://localhost:5005/

##### Response samples

* 200

Content type

text/plain

Copy

```

Hello from Rasa: 1.0.0

```

#### [](#tag/Server-Information/operation/getLicense)Information about your Rasa Pro License

Returns the license information about your Rasa Pro License

##### Responses

**200**

Rasa Pro License Information

get/license

Local development server

http://localhost:5005/license

##### Response samples

* 200

Content type

application/json

Copy

`{

"id": "u5fn8888-e213-4c12-9542-0baslfdkjas",

"company": "acme",

"scope": "rasa:pro rasa:voice",

"email": "acme@email.com",

"expires": "2026-01-01T00:00:00+00:00"

}`

#### [](#tag/Server-Information/operation/getVersion)Version of Rasa

Returns the version of Rasa.

##### Responses

**200**

Version of Rasa

get/version

Local development server

http://localhost:5005/version

##### Response samples

* 200

Content type

application/json

Copy

`{

"version": "1.0.0",

"minimum_compatible_version": "1.0.0"

}`

#### [](#tag/Server-Information/operation/getStatus)Status of the Rasa server

Information about the server and the currently loaded Rasa model.

###### Authorizations:

*TokenAuth\*\*JWT*

##### Responses

**200**

Success

**401**

User is not authenticated.

**403**

User has insufficient permission.

**409**

The request conflicts with the currently loaded model.

get/status

Local development server

http://localhost:5005/status

##### Response samples

* 200

* 401

* 403

* 409

Content type

application/json

Copy

`{

"model_id": "75a985b7b86d442ca013d61ea4781b22",

"model_file": "20190429-103105.tar.gz",

"num_active_training_jobs": 2

}`

#### [](#tag/Tracker)Tracker

#### [](#tag/Tracker/operation/getConversationTracker)Retrieve a conversations tracker

The tracker represents the state of the conversation. The state of the tracker is created by applying a sequence of events, which modify the state. These events can optionally be included in the response.

###### Authorizations:

*TokenAuth\*\*JWT*

###### path Parameters

| | |

| ------------------------ | ----------------------------------------------------------- |

| conversation\_idrequired | stringExample:defaultId of the conversation |

###### query Parameters

| | |

| --------------- | ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------ |

| include\_events | stringDefault:"AFTER\_RESTART"Enum: "ALL" "APPLIED" "AFTER\_RESTART" "NONE"Example:include\_events=AFTER\_RESTARTSpecify which events of the tracker the response should contain.-`ALL` - every logged event.

-`APPLIED` - only events that contribute to the trackers state. This excludes reverted utterances and actions that got undone.

-`AFTER_RESTART` - all events since the last `restarted` event. This includes utterances that got reverted and actions that got undone.

-`NONE` - no events. |

| until | numberDefault:"None"Example:until=1559744410All events previous to the passed timestamp will be replayed. Events that occur exactly at the target time will be included. |

##### Responses

**200**

Success

**400**

Bad Request

**401**

User is not authenticated.

**403**

User has insufficient permission.

**409**

The request conflicts with the currently loaded model.

**500**

An unexpected error occurred.

get/conversations/{conversation\_id}/tracker

Local development server

http://localhost:5005/conversations/{conversation\_id}/tracker

##### Response samples

* 200

* 400

* 401

* 403

* 409

* 500

Content type

application/json

Copy

Expand all Collapse all

`{

"sender_id": "default",

"slots": [

{

"slot_name": "slot_value"

}

],

"latest_message": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

},

"latest_event_time": 1537645578.314389,

"followup_action": "string",

"paused": false,

"stack": [

{

"frame_id": "8UJPHH5C",

"flow_id": "transfer_money",

"step_id": "START",

"frame_type": "regular",

"type": "flow"

}

],

"events": [

{

"event": "user",

"timestamp": null,

"metadata": {

"arbitrary_metadata_key": "some string",

"more_metadata": 1

},

"text": "string",

"input_channel": "string",

"message_id": "string",

"parse_data": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

}

}

],

"latest_input_channel": "rest",

"latest_action_name": "action_listen",

"latest_action": {

"action_name": "string",

"action_text": "string"

},

"active_loop": {

"name": "restaurant_form"

}

}`

#### [](#tag/Tracker/operation/deleteConversationTracker)Delete tracker for a specific conversation

Deletes the tracker of a conversation.

###### Authorizations:

*TokenAuth\*\*JWT*

###### path Parameters

| | |

| ------------------------ | ----------------------------------------------------------- |

| conversation\_idrequired | stringExample:defaultId of the conversation |

##### Responses

**204**

Tracker successfully deleted.

**401**

User is not authenticated.

**403**

User has insufficient permission.

**404**

Conversation not found.

**409**

The request conflicts with the currently loaded model.

**500**

An unexpected error occurred.

delete/conversations/{conversation\_id}/tracker

Local development server

http://localhost:5005/conversations/{conversation\_id}/tracker

##### Response samples

* 401

* 403

* 409

* 500

Content type

application/json

Copy

`{

"version": "1.0.0",

"status": "failure",

"reason": "NotAuthenticated",

"message": "User is not authenticated to access resource.",

"code": 401

}`

#### [](#tag/Tracker/operation/addConversationTrackerEvents)Append events to a tracker

Appends one or multiple new events to the tracker state of the conversation. Any existing events will be kept and the new events will be appended, updating the existing state. If events are appended to a new conversation ID, the tracker will be initialised with a new session.

###### Authorizations:

*TokenAuth\*\*JWT*

###### path Parameters

| | |

| ------------------------ | ----------------------------------------------------------- |

| conversation\_idrequired | stringExample:defaultId of the conversation |

###### query Parameters

| | |

| ---------------------- | ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------ |

| include\_events | stringDefault:"AFTER\_RESTART"Enum: "ALL" "APPLIED" "AFTER\_RESTART" "NONE"Example:include\_events=AFTER\_RESTARTSpecify which events of the tracker the response should contain.-`ALL` - every logged event.

-`APPLIED` - only events that contribute to the trackers state. This excludes reverted utterances and actions that got undone.

-`AFTER_RESTART` - all events since the last `restarted` event. This includes utterances that got reverted and actions that got undone.

-`NONE` - no events. |

| output\_channel | stringEnum: "latest" "slack" "callback" "facebook" "rocketchat" "telegram" "twilio" "webexteams" "socketio"Example:output\_channel=slackThe bot's utterances will be forwarded to this channel. It uses the credentials listed in `credentials.yml` to connect. In case the channel does not support this, the utterances will be returned in the response body. Use `latest` to try to send the messages to the latest channel the user used. Currently supported channels are listed in the permitted values for the parameter. |

| execute\_side\_effects | booleanDefault:falseIf `true`, any `BotUttered` event will be forwarded to the channel specified in the `output_channel` parameter. Any `ReminderScheduled` or `ReminderCancelled` event will also be processed. |

###### Request Body schema: application/jsonrequired

One of

EventEventList

Any of

UserEventBotEventSessionStartedEventActionEventSlotEventResetSlotsEventRestartEventReminderEventCancelReminderEventPauseEventResumeEventFollowupEventExportEventUndoEventRewindEventAgentEventEntitiesAddedEventUserFeaturizationEventActionExecutionRejectedEventFormValidationEventLoopInterruptedEventFormEventActiveLoopEvent

| | |

| -------------- | -------------------------------------------------------------------------------------------------------------------------------------------------------------- |

| eventrequired | stringValue: "user"Event name |

| timestamp | integerTime of application |

| metadata | object |

| text | string or nullText of user message. |

| input\_channel | string or null |

| message\_id | string or null |

| parse\_data | object (ParseResult)NLU parser information. If set, message will not be passed through NLU, but instead this parsing information will be used. |

##### Responses

**200**

Success

**400**

Bad Request

**401**

User is not authenticated.

**403**

User has insufficient permission.

**409**

The request conflicts with the currently loaded model.

**500**

An unexpected error occurred.

post/conversations/{conversation\_id}/tracker/events

Local development server

http://localhost:5005/conversations/{conversation\_id}/tracker/events

##### Request samples

* Payload

Content type

application/json

Example

EventEvent

Copy

Expand all Collapse all

`{

"event": "user",

"timestamp": null,

"metadata": {

"arbitrary_metadata_key": "some string",

"more_metadata": 1

},

"text": "string",

"input_channel": "string",

"message_id": "string",

"parse_data": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

}

}`

##### Response samples

* 200

* 400

* 401

* 403

* 409

* 500

Content type

application/json

Copy

Expand all Collapse all

`{

"sender_id": "default",

"slots": [

{

"slot_name": "slot_value"

}

],

"latest_message": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

},

"latest_event_time": 1537645578.314389,

"followup_action": "string",

"paused": false,

"stack": [

{

"frame_id": "8UJPHH5C",

"flow_id": "transfer_money",

"step_id": "START",

"frame_type": "regular",

"type": "flow"

}

],

"events": [

{

"event": "user",

"timestamp": null,

"metadata": {

"arbitrary_metadata_key": "some string",

"more_metadata": 1

},

"text": "string",

"input_channel": "string",

"message_id": "string",

"parse_data": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

}

}

],

"latest_input_channel": "rest",

"latest_action_name": "action_listen",

"latest_action": {

"action_name": "string",

"action_text": "string"

},

"active_loop": {

"name": "restaurant_form"

}

}`

#### [](#tag/Tracker/operation/replaceConversationTrackerEvents)Replace a trackers events

Replaces all events of a tracker with the passed list of events. This endpoint should not be used to modify trackers in a production setup, but rather for creating training data.

###### Authorizations:

*TokenAuth\*\*JWT*

###### path Parameters

| | |

| ------------------------ | ----------------------------------------------------------- |

| conversation\_idrequired | stringExample:defaultId of the conversation |

###### query Parameters

| | |

| --------------- | ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------ |

| include\_events | stringDefault:"AFTER\_RESTART"Enum: "ALL" "APPLIED" "AFTER\_RESTART" "NONE"Example:include\_events=AFTER\_RESTARTSpecify which events of the tracker the response should contain.-`ALL` - every logged event.

-`APPLIED` - only events that contribute to the trackers state. This excludes reverted utterances and actions that got undone.

-`AFTER_RESTART` - all events since the last `restarted` event. This includes utterances that got reverted and actions that got undone.

-`NONE` - no events. |

###### Request Body schema: application/jsonrequired

Array

Any of

UserEventBotEventSessionStartedEventActionEventSlotEventResetSlotsEventRestartEventReminderEventCancelReminderEventPauseEventResumeEventFollowupEventExportEventUndoEventRewindEventAgentEventEntitiesAddedEventUserFeaturizationEventActionExecutionRejectedEventFormValidationEventLoopInterruptedEventFormEventActiveLoopEvent

| | |

| -------------- | -------------------------------------------------------------------------------------------------------------------------------------------------------------- |

| eventrequired | stringValue: "user"Event name |

| timestamp | integerTime of application |

| metadata | object |

| text | string or nullText of user message. |

| input\_channel | string or null |

| message\_id | string or null |

| parse\_data | object (ParseResult)NLU parser information. If set, message will not be passed through NLU, but instead this parsing information will be used. |

##### Responses

**200**

Success

**400**

Bad Request

**401**

User is not authenticated.

**403**

User has insufficient permission.

**409**

The request conflicts with the currently loaded model.

**500**

An unexpected error occurred.

put/conversations/{conversation\_id}/tracker/events

Local development server

http://localhost:5005/conversations/{conversation\_id}/tracker/events

##### Request samples

* Payload

Content type

application/json

Copy

Expand all Collapse all

`[

{

"event": "user",

"timestamp": null,

"metadata": {

"arbitrary_metadata_key": "some string",

"more_metadata": 1

},

"text": "string",

"input_channel": "string",

"message_id": "string",

"parse_data": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

}

}

]`

##### Response samples

* 200

* 400

* 401

* 403

* 409

* 500

Content type

application/json

Copy

Expand all Collapse all

`{

"sender_id": "default",

"slots": [

{

"slot_name": "slot_value"

}

],

"latest_message": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

},

"latest_event_time": 1537645578.314389,

"followup_action": "string",

"paused": false,

"stack": [

{

"frame_id": "8UJPHH5C",

"flow_id": "transfer_money",

"step_id": "START",

"frame_type": "regular",

"type": "flow"

}

],

"events": [

{

"event": "user",

"timestamp": null,

"metadata": {

"arbitrary_metadata_key": "some string",

"more_metadata": 1

},

"text": "string",

"input_channel": "string",

"message_id": "string",

"parse_data": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

}

}

],

"latest_input_channel": "rest",

"latest_action_name": "action_listen",

"latest_action": {

"action_name": "string",

"action_text": "string"

},

"active_loop": {

"name": "restaurant_form"

}

}`

#### [](#tag/Tracker/operation/getConversationStory)Retrieve an end-to-end story corresponding to a conversation

The story represents the whole conversation in end-to-end format. This can be posted to the '/test/stories' endpoint and used as a test.

###### Authorizations:

*TokenAuth\*\*JWT*

###### path Parameters

| | |

| ------------------------ | ----------------------------------------------------------- |

| conversation\_idrequired | stringExample:defaultId of the conversation |

###### query Parameters

| | |

| ------------- | ---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

| until | numberDefault:"None"Example:until=1559744410All events previous to the passed timestamp will be replayed. Events that occur exactly at the target time will be included. |

| all\_sessions | booleanDefault:falseWhether to fetch all sessions in a conversation, or only the latest session- `true` - fetch all conversation sessions.

- `false` - \[default] fetch only the latest conversation session. |

##### Responses

**200**

Success

**401**

User is not authenticated.

**403**

User has insufficient permission.

**409**

The request conflicts with the currently loaded model.

**500**

An unexpected error occurred.

get/conversations/{conversation\_id}/story

Local development server

http://localhost:5005/conversations/{conversation\_id}/story

##### Response samples

* 200

* 401

* 403

* 409

* 500

Content type

text/yml

Copy

```

- story: story_00055028

steps:

- user: |

hello

intent: greet

- action: utter_ask_howcanhelp

- user: |

I'm looking for a [moderately priced]{"entity": "price", "value": "moderate"} [Indian]{"entity": "cuisine"} restaurant for [two]({"entity": "people"}) people

intent: inform

- action: utter_on_it

- action: utter_ask_location

```

#### [](#tag/Tracker/operation/executeConversationAction)Run an action in a conversation Deprecated

DEPRECATED. Runs the action, calling the action server if necessary. Any responses sent by the executed action will be forwarded to the channel specified in the output\_channel parameter. If no output channel is specified, any messages that should be sent to the user will be included in the response of this endpoint.

###### Authorizations:

*TokenAuth\*\*JWT*

###### path Parameters

| | |

| ------------------------ | ----------------------------------------------------------- |

| conversation\_idrequired | stringExample:defaultId of the conversation |

###### query Parameters

| | |

| --------------- | ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------ |

| include\_events | stringDefault:"AFTER\_RESTART"Enum: "ALL" "APPLIED" "AFTER\_RESTART" "NONE"Example:include\_events=AFTER\_RESTARTSpecify which events of the tracker the response should contain.-`ALL` - every logged event.

-`APPLIED` - only events that contribute to the trackers state. This excludes reverted utterances and actions that got undone.

-`AFTER_RESTART` - all events since the last `restarted` event. This includes utterances that got reverted and actions that got undone.

-`NONE` - no events. |

| output\_channel | stringEnum: "latest" "slack" "callback" "facebook" "rocketchat" "telegram" "twilio" "webexteams" "socketio"Example:output\_channel=slackThe bot's utterances will be forwarded to this channel. It uses the credentials listed in `credentials.yml` to connect. In case the channel does not support this, the utterances will be returned in the response body. Use `latest` to try to send the messages to the latest channel the user used. Currently supported channels are listed in the permitted values for the parameter. |

###### Request Body schema: application/jsonrequired

| | |

| ------------ | ----------------------------------------------------------- |

| namerequired | stringName of the action to be executed. |

| policy | string or nullName of the policy that predicted the action. |

| confidence | number or nullConfidence of the prediction. |

##### Responses

**200**

Success

**400**

Bad Request

**401**

User is not authenticated.

**403**

User has insufficient permission.

**409**

The request conflicts with the currently loaded model.

**500**

An unexpected error occurred.

post/conversations/{conversation\_id}/execute

Local development server

http://localhost:5005/conversations/{conversation\_id}/execute

##### Request samples

* Payload

Content type

application/json

Copy

`{

"name": "utter_greet",

"policy": "string",

"confidence": 0.987232

}`

##### Response samples

* 200

* 400

* 401

* 403

* 409

* 500

Content type

application/json

Copy

Expand all Collapse all

`{

"tracker": {

"sender_id": "default",

"slots": [

{

"slot_name": "slot_value"

}

],

"latest_message": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

},

"latest_event_time": 1537645578.314389,

"followup_action": "string",

"paused": false,

"stack": [

{

"frame_id": "8UJPHH5C",

"flow_id": "transfer_money",

"step_id": "START",

"frame_type": "regular",

"type": "flow"

}

],

"events": [

{

"event": "user",

"timestamp": null,

"metadata": {

"arbitrary_metadata_key": "some string",

"more_metadata": 1

},

"text": "string",

"input_channel": "string",

"message_id": "string",

"parse_data": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

}

}

],

"latest_input_channel": "rest",

"latest_action_name": "action_listen",

"latest_action": {

"action_name": "string",

"action_text": "string"

},

"active_loop": {

"name": "restaurant_form"

}

},

"messages": [

{

"recipient_id": "string",

"text": "string",

"image": "string",

"buttons": [

{

"title": "string",

"payload": "string"

}

],

"attachement": [

{

"title": "string",

"payload": "string"

}

]

}

]

}`

#### [](#tag/Tracker/operation/triggerConversationIntent)Inject an intent into a conversation

Sends a specified intent and list of entities in place of a user message. The bot then predicts and executes a response action. Any responses sent by the executed action will be forwarded to the channel specified in the `output_channel` parameter. If no output channel is specified, any messages that should be sent to the user will be included in the response of this endpoint.

###### Authorizations:

*TokenAuth\*\*JWT*

###### path Parameters

| | |

| ------------------------ | ----------------------------------------------------------- |

| conversation\_idrequired | stringExample:defaultId of the conversation |

###### query Parameters

| | |

| --------------- | ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------ |

| include\_events | stringDefault:"AFTER\_RESTART"Enum: "ALL" "APPLIED" "AFTER\_RESTART" "NONE"Example:include\_events=AFTER\_RESTARTSpecify which events of the tracker the response should contain.-`ALL` - every logged event.

-`APPLIED` - only events that contribute to the trackers state. This excludes reverted utterances and actions that got undone.

-`AFTER_RESTART` - all events since the last `restarted` event. This includes utterances that got reverted and actions that got undone.

-`NONE` - no events. |

| output\_channel | stringEnum: "latest" "slack" "callback" "facebook" "rocketchat" "telegram" "twilio" "webexteams" "socketio"Example:output\_channel=slackThe bot's utterances will be forwarded to this channel. It uses the credentials listed in `credentials.yml` to connect. In case the channel does not support this, the utterances will be returned in the response body. Use `latest` to try to send the messages to the latest channel the user used. Currently supported channels are listed in the permitted values for the parameter. |

###### Request Body schema: application/jsonrequired

| | |

| ------------ | ---------------------------------------- |

| namerequired | stringName of the intent to be executed. |

| entities | object or nullEntities to be passed on. |

##### Responses

**200**

Success

**400**

Bad Request

**401**

User is not authenticated.

**403**

User has insufficient permission.

**409**

The request conflicts with the currently loaded model.

**500**

An unexpected error occurred.

post/conversations/{conversation\_id}/trigger\_intent

Local development server

http://localhost:5005/conversations/{conversation\_id}/trigger\_intent

##### Request samples

* Payload

Content type

application/json

Copy

Expand all Collapse all

`{

"name": "greet",

"entities": {

"temperature": "high"

}

}`

##### Response samples

* 200

* 400

* 401

* 403

* 409

* 500

Content type

application/json

Copy

Expand all Collapse all

`{

"tracker": {

"sender_id": "default",

"slots": [

{

"slot_name": "slot_value"

}

],

"latest_message": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

},

"latest_event_time": 1537645578.314389,

"followup_action": "string",

"paused": false,

"stack": [

{

"frame_id": "8UJPHH5C",

"flow_id": "transfer_money",

"step_id": "START",

"frame_type": "regular",

"type": "flow"

}

],

"events": [

{

"event": "user",

"timestamp": null,

"metadata": {

"arbitrary_metadata_key": "some string",

"more_metadata": 1

},

"text": "string",

"input_channel": "string",

"message_id": "string",

"parse_data": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

}

}

],

"latest_input_channel": "rest",

"latest_action_name": "action_listen",

"latest_action": {

"action_name": "string",

"action_text": "string"

},

"active_loop": {

"name": "restaurant_form"

}

},

"messages": [

{

"recipient_id": "string",

"text": "string",

"image": "string",

"buttons": [

{

"title": "string",

"payload": "string"

}

],

"attachement": [

{

"title": "string",

"payload": "string"

}

]

}

]

}`

#### [](#tag/Tracker/operation/predictConversationAction)Predict the next action

Runs the conversations tracker through the model's policies to predict the scores of all actions present in the model's domain. Actions are returned in the 'scores' array, sorted on their 'score' values. The state of the tracker is not modified.

###### Authorizations:

*TokenAuth\*\*JWT*

###### path Parameters

| | |

| ------------------------ | ----------------------------------------------------------- |

| conversation\_idrequired | stringExample:defaultId of the conversation |

##### Responses

**200**

Success

**400**

Bad Request

**401**

User is not authenticated.

**403**

User has insufficient permission.

**409**

The request conflicts with the currently loaded model.

**500**

An unexpected error occurred.

post/conversations/{conversation\_id}/predict

Local development server

http://localhost:5005/conversations/{conversation\_id}/predict

##### Response samples

* 200

* 400

* 401

* 403

* 409

* 500

Content type

application/json

Copy

Expand all Collapse all

`{

"scores": [

{

"action": "utter_greet",

"score": 1

}

],

"policy": "policy_2_TEDPolicy",

"confidence": 0.057,

"tracker": {

"sender_id": "default",

"slots": [

{

"slot_name": "slot_value"

}

],

"latest_message": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

},

"latest_event_time": 1537645578.314389,

"followup_action": "string",

"paused": false,

"stack": [

{

"frame_id": "8UJPHH5C",

"flow_id": "transfer_money",

"step_id": "START",

"frame_type": "regular",

"type": "flow"

}

],

"events": [

{

"event": "user",

"timestamp": null,

"metadata": {

"arbitrary_metadata_key": "some string",

"more_metadata": 1

},

"text": "string",

"input_channel": "string",

"message_id": "string",

"parse_data": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

}

}

],

"latest_input_channel": "rest",

"latest_action_name": "action_listen",

"latest_action": {

"action_name": "string",

"action_text": "string"

},

"active_loop": {

"name": "restaurant_form"

}

}

}`

#### [](#tag/Tracker/operation/addConversationMessage)Add a message to a tracker

Adds a message to a tracker. This doesn't trigger the prediction loop. It will log the message on the tracker and return, no actions will be predicted or run. This is often used together with the predict endpoint.

###### Authorizations:

*TokenAuth\*\*JWT*

###### path Parameters

| | |

| ------------------------ | ----------------------------------------------------------- |

| conversation\_idrequired | stringExample:defaultId of the conversation |

###### query Parameters

| | |

| --------------- | ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------ |

| include\_events | stringDefault:"AFTER\_RESTART"Enum: "ALL" "APPLIED" "AFTER\_RESTART" "NONE"Example:include\_events=AFTER\_RESTARTSpecify which events of the tracker the response should contain.-`ALL` - every logged event.

-`APPLIED` - only events that contribute to the trackers state. This excludes reverted utterances and actions that got undone.

-`AFTER_RESTART` - all events since the last `restarted` event. This includes utterances that got reverted and actions that got undone.

-`NONE` - no events. |

###### Request Body schema: application/jsonrequired

| | |

| -------------- | -------------------------------------------------------------------------------------------------------------------------------------------------------------- |

| textrequired | stringMessage text |

| senderrequired | stringValue: "user"Origin of the message - who sent it |

| parse\_data | object (ParseResult)NLU parser information. If set, message will not be passed through NLU, but instead this parsing information will be used. |

##### Responses

**200**

Success

**400**

Bad Request

**401**

User is not authenticated.

**403**

User has insufficient permission.

**409**

The request conflicts with the currently loaded model.

**500**

An unexpected error occurred.

post/conversations/{conversation\_id}/messages

Local development server

http://localhost:5005/conversations/{conversation\_id}/messages

##### Request samples

* Payload

Content type

application/json

Copy

Expand all Collapse all

`{

"text": "Hello!",

"sender": "user",

"parse_data": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

}

}`

##### Response samples

* 200

* 400

* 401

* 403

* 409

* 500

Content type

application/json

Copy

Expand all Collapse all

`{

"sender_id": "default",

"slots": [

{

"slot_name": "slot_value"

}

],

"latest_message": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

},

"latest_event_time": 1537645578.314389,

"followup_action": "string",

"paused": false,

"stack": [

{

"frame_id": "8UJPHH5C",

"flow_id": "transfer_money",

"step_id": "START",

"frame_type": "regular",

"type": "flow"

}

],

"events": [

{

"event": "user",

"timestamp": null,

"metadata": {

"arbitrary_metadata_key": "some string",

"more_metadata": 1

},

"text": "string",

"input_channel": "string",

"message_id": "string",

"parse_data": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

}

}

],

"latest_input_channel": "rest",

"latest_action_name": "action_listen",

"latest_action": {

"action_name": "string",

"action_text": "string"

},

"active_loop": {

"name": "restaurant_form"

}

}`

#### [](#tag/Model)Model

#### [](#tag/Model/operation/trainModel)Train a Rasa model

Trains a new Rasa model. Depending on the data given only a dialogue model, only a NLU model, or a model combining a trained dialogue model with an NLU model will be trained. The new model is not loaded by default.

###### Authorizations:

*TokenAuth\*\*JWT*

###### query Parameters

| | |

| ----------------------------------- | --------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

| save\_to\_default\_model\_directory | booleanDefault:trueIf `true` (default) the trained model will be saved in the default model directory, if `false` it will be saved in a temporary directory |

| force\_training | booleanDefault:falseForce a model training even if the data has not changed |

| augmentation | stringDefault:50How much data augmentation to use during training |

| num\_threads | stringDefault:1Maximum amount of threads to use when training |

| callback\_url | stringDefault:"None"Example:callback\_url=https://example.com/rasa\_evaluationsIf specified the call will return immediately with an empty response and status code 204. The actual result or any errors will be sent to the given callback URL as the body of a post request. |

###### Request Body schema: application/yamlrequired

The training data should be in YAML format.

| | |

| ----------------------------------- | ------------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

| pipeline | Array of arraysPipeline list |

| policies | Array of arraysPolicies list |

| entities | Array of arraysEntity list |

| slots | Array of arraysSlots list |

| actions | Array of arraysAction list |

| forms | Array of arraysForms list |

| e2e\_actions | Array of arraysE2E Action list |

| responses | objectBot response templates |

| session\_config | objectSession configuration options |

| nlu | Array of arraysRasa NLU data, array of intents |

| rules | Array of arraysRule list |

| stories | Array of arraysRasa Core stories in YAML format |

| flows | objectRasa Pro flows in YAML format |

| force | booleanDeprecatedForce a model training even if the data has not changed |

| save\_to\_default\_model\_directory | booleanDeprecatedIf `true` (default) the trained model will be saved in the default model directory, if `false` it will be saved in a temporary directory |

##### Responses

**200**

Zipped Rasa model

**204**

The incoming request specified a `callback_url` and hence the request will return immediately with an empty response. The actual response will be sent to the provided `callback_url` via POST request.

**400**

Bad Request

**401**

User is not authenticated.

**403**

User has insufficient permission.

**500**

An unexpected error occurred.

post/model/train

Local development server

http://localhost:5005/model/train

##### Request samples

* Payload

Content type

application/yaml

Copy

```

pipeline: []

policies: []

intents:

- greet

- goodbye

entities: []

slots:

contacts_list:

type: text

mappings:

- type: custom

action: list_contacts

actions:

- list_contacts

forms: {}

e2e_actions: []

responses:

utter_greet:

- text: "Hey! How are you?"

utter_goodbye:

- text: "Bye"

utter_list_contacts:

- text: "You currently have the following contacts:\n{contacts_list}"

utter_no_contacts:

- text: "You have no contacts in your list."

session_config:

session_expiration_time: 60

carry_over_slots_to_new_session: true

nlu:

- intent: greet

examples: |

- hey

- hello

- intent: goodbye

examples: |

- bye

- goodbye

rules:

- rule: Say goodbye anytime the user says goodbye

steps:

- intent: goodbye

- action: utter_goodbye

stories:

- story: happy path

steps:

- intent: greet

- action: utter_greet

- intent: goodbye

- action: utter_goodbye

flows:

list_contacts:

name: list your contacts

description: show your contact list

steps:

- action: list_contacts

next:

- if: "slots.contacts_list"

then:

- action: utter_list_contacts

next: END

- else:

- action: utter_no_contacts

next: END

```

##### Response samples

* 400

* 401

* 403

* 500

Content type

application/json

Copy

`{

"version": "1.0.0",

"status": "failure",

"reason": "BadRequest",

"code": 400

}`

#### [](#tag/Model/operation/testModelStories)Evaluate stories

Evaluates one or multiple stories against the currently loaded Rasa model.

###### Authorizations:

*TokenAuth\*\*JWT*

###### query Parameters

| | |

| --- | ------------------------------------------------------------------------------------------- |

| e2e | booleanDefault:falsePerform an end-to-end evaluation on the posted stories. |

###### Request Body schema: text/ymlrequired

string (

StoriesTrainingData

)

Rasa Core stories in YAML format

##### Responses

**200**

Success

**400**

Bad Request

**401**

User is not authenticated.

**403**

User has insufficient permission.

**409**

The request conflicts with the currently loaded model.

**500**

An unexpected error occurred.

post/model/test/stories

Local development server

http://localhost:5005/model/test/stories

##### Response samples

* 200

* 400

* 401

* 403

* 409

* 500

Content type

application/json

Copy

Expand all Collapse all

`{

"actions": [

{

"action": "utter_ask_howcanhelp",

"predicted": "utter_ask_howcanhelp",

"policy": "policy_0_MemoizationPolicy",

"confidence": 1

}

],

"is_end_to_end_evaluation": true,

"precision": 1,

"f1": 0.9333333333333333,

"accuracy": 0.9,

"in_training_data_fraction": 0.8571428571428571,

"report": {

"conversation_accuracy": {

"accuracy": 0.19047619047619047,

"correct": 18,

"with_warnings": 1,

"total": 20

},

"property1": {

"intent_name": "string",

"classification_report": {

"greet": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100,

"confused_with": {

"chitchat": 3,

"nlu_fallback": 5

}

},

"micro avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

},

"macro avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

},

"weightedq avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

}

}

},

"property2": {

"intent_name": "string",

"classification_report": {

"greet": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100,

"confused_with": {

"chitchat": 3,

"nlu_fallback": 5

}

},

"micro avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

},

"macro avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

},

"weightedq avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

}

}

}

}

}`

#### [](#tag/Model/operation/testModelIntent)Perform an intent evaluation

Evaluates NLU model against a model or using cross-validation.

###### Authorizations:

*TokenAuth\*\*JWT*

###### query Parameters

| | |

| ------------------------ | ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

| model | stringExample:model=rasa-model.tar.gzModel that should be used for evaluation. If the parameter is set, the model will be fetched with the currently loaded configuration setup. However, the currently loaded model will not be updated. The state of the server will not change. If the parameter is not set, the currently loaded model will be used for the evaluation. |

| callback\_url | stringDefault:"None"Example:callback\_url=https://example.com/rasa\_evaluationsIf specified the call will return immediately with an empty response and status code 204. The actual result or any errors will be sent to the given callback URL as the body of a post request. |

| cross\_validation\_folds | integerDefault:nullNumber of cross validation folds. If this parameter is specified the given training data will be used for a cross-validation instead of using it as test set for the specified model. Note that this is only supported for YAML data. |

###### Request Body schema:application/x-yamlapplication/x-yamlrequired

string

NLU training data and model configuration. The model configuration is only required if cross-validation is used.

##### Responses

**200**

NLU evaluation result

**204**

The incoming request specified a `callback_url` and hence the request will return immediately with an empty response. The actual response will be sent to the provided `callback_url` via POST request.

**400**

Bad Request

**401**

User is not authenticated.

**403**

User has insufficient permission.

**409**

The request conflicts with the currently loaded model.

**500**

An unexpected error occurred.

post/model/test/intents

Local development server

http://localhost:5005/model/test/intents

##### Request samples

* Payload

Content type

application/x-yamlapplication/x-yaml

No sample

##### Response samples

* 200

* 400

* 401

* 403

* 409

* 500

Content type

application/json

Copy

Expand all Collapse all

`{

"intent_evaluation": {

"report": {

"greet": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100,

"confused_with": {

"chitchat": 3,

"nlu_fallback": 5

}

},

"micro avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

},

"macro avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

},

"weightedq avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

}

},

"accuracy": 0.19047619047619047,

"f1_score": 0.06095238095238095,

"precision": 0.036281179138321996,

"predictions": [

{

"intent": "greet",

"predicted": "greet",

"text": "hey",

"confidence": 0.9973567

}

],

"errors": [

{

"text": "are you alright?",

"intent_response_key_target": "string",

"intent_response_key_prediction": {

"confidence": 0.6323,

"name": "greet"

}

}

]

},

"response_selection_evaluation": {

"report": {

"greet": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100,

"confused_with": {

"chitchat": 3,

"nlu_fallback": 5

}

},

"micro avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

},

"macro avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

},

"weightedq avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

}

},

"accuracy": 0.19047619047619047,

"f1_score": 0.06095238095238095,

"precision": 0.036281179138321996,

"predictions": [

{

"intent": "greet",

"predicted": "greet",

"text": "hey",

"confidence": 0.9973567

}

],

"errors": [

{

"text": "are you alright?",

"intent_response_key_target": "string",

"intent_response_key_prediction": {

"confidence": 0.6323,

"name": "greet"

}

}

]

},

"entity_evaluation": {

"property1": {

"report": {

"greet": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100,

"confused_with": {

"chitchat": 3,

"nlu_fallback": 5

}

},

"micro avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

},

"macro avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

},

"weightedq avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

}

},

"accuracy": 0.19047619047619047,

"f1_score": 0.06095238095238095,

"precision": 0.036281179138321996,

"predictions": [

{

"intent": "greet",

"predicted": "greet",

"text": "hey",

"confidence": 0.9973567

}

],

"errors": [

{

"text": "are you alright?",

"intent_response_key_target": "string",

"intent_response_key_prediction": {

"confidence": 0.6323,

"name": "greet"

}

}

]

},

"property2": {

"report": {

"greet": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100,

"confused_with": {

"chitchat": 3,

"nlu_fallback": 5

}

},

"micro avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

},

"macro avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

},

"weightedq avg": {

"precision": 0.123,

"recall": 0.456,

"f1-score": 0.12,

"support": 100

}

},

"accuracy": 0.19047619047619047,

"f1_score": 0.06095238095238095,

"precision": 0.036281179138321996,

"predictions": [

{

"intent": "greet",

"predicted": "greet",

"text": "hey",

"confidence": 0.9973567

}

],

"errors": [

{

"text": "are you alright?",

"intent_response_key_target": "string",

"intent_response_key_prediction": {

"confidence": 0.6323,

"name": "greet"

}

}

]

}

}

}`

#### [](#tag/Model/operation/predictModelAction)Predict an action on a temporary state

Predicts the next action on the tracker state as it is posted to this endpoint. Rasa will create a temporary tracker from the provided events and will use it to predict an action. No messages will be sent and no action will be run.

###### Authorizations:

*TokenAuth\*\*JWT*

###### query Parameters

| | |

| --------------- | ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------ |

| include\_events | stringDefault:"AFTER\_RESTART"Enum: "ALL" "APPLIED" "AFTER\_RESTART" "NONE"Example:include\_events=AFTER\_RESTARTSpecify which events of the tracker the response should contain.-`ALL` - every logged event.

-`APPLIED` - only events that contribute to the trackers state. This excludes reverted utterances and actions that got undone.

-`AFTER_RESTART` - all events since the last `restarted` event. This includes utterances that got reverted and actions that got undone.

-`NONE` - no events. |

###### Request Body schema: application/jsonrequired

Array

Any of

UserEventBotEventSessionStartedEventActionEventSlotEventResetSlotsEventRestartEventReminderEventCancelReminderEventPauseEventResumeEventFollowupEventExportEventUndoEventRewindEventAgentEventEntitiesAddedEventUserFeaturizationEventActionExecutionRejectedEventFormValidationEventLoopInterruptedEventFormEventActiveLoopEvent

| | |

| -------------- | -------------------------------------------------------------------------------------------------------------------------------------------------------------- |

| eventrequired | stringValue: "user"Event name |

| timestamp | integerTime of application |

| metadata | object |

| text | string or nullText of user message. |

| input\_channel | string or null |

| message\_id | string or null |

| parse\_data | object (ParseResult)NLU parser information. If set, message will not be passed through NLU, but instead this parsing information will be used. |

##### Responses

**200**

Success

**400**

Bad Request

**401**

User is not authenticated.

**403**

User has insufficient permission.

**409**

The request conflicts with the currently loaded model.

**500**

An unexpected error occurred.

post/model/predict

Local development server

http://localhost:5005/model/predict

##### Request samples

* Payload

Content type

application/json

Copy

Expand all Collapse all

`[

{

"event": "user",

"timestamp": null,

"metadata": {

"arbitrary_metadata_key": "some string",

"more_metadata": 1

},

"text": "string",

"input_channel": "string",

"message_id": "string",

"parse_data": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

}

}

]`

##### Response samples

* 200

* 400

* 401

* 403

* 409

* 500

Content type

application/json

Copy

Expand all Collapse all

`{

"scores": [

{

"action": "utter_greet",

"score": 1

}

],

"policy": "policy_2_TEDPolicy",

"confidence": 0.057,

"tracker": {

"sender_id": "default",

"slots": [

{

"slot_name": "slot_value"

}

],

"latest_message": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

},

"latest_event_time": 1537645578.314389,

"followup_action": "string",

"paused": false,

"stack": [

{

"frame_id": "8UJPHH5C",

"flow_id": "transfer_money",

"step_id": "START",

"frame_type": "regular",

"type": "flow"

}

],

"events": [

{

"event": "user",

"timestamp": null,

"metadata": {

"arbitrary_metadata_key": "some string",

"more_metadata": 1

},

"text": "string",

"input_channel": "string",

"message_id": "string",

"parse_data": {

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"intent_ranking": [

{

"confidence": 0.6323,

"name": "greet"

}

],

"text": "Hello!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a",

"metadata": { },

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

],

"flows_from_semantic_search": [ [

"transfer_money",

0.9035494923591614

]

],

"flows_in_prompt": [

"transfer_money"

]

}

}

],

"latest_input_channel": "rest",

"latest_action_name": "action_listen",

"latest_action": {

"action_name": "string",

"action_text": "string"

},

"active_loop": {

"name": "restaurant_form"

}

}

}`

#### [](#tag/Model/operation/parseModelMessage)Parse a message using the Rasa model

Predicts the intent and entities of the message posted to this endpoint. No messages will be stored to a conversation and no action will be run. This will just retrieve the NLU parse results.

###### Authorizations:

*TokenAuth\*\*JWT*

###### query Parameters

| | |

| --------------- | --------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

| emulation\_mode | stringEnum: "WIT" "LUIS" "DIALOGFLOW"Example:emulation\_mode=LUISSpecify the emulation mode to use. Emulation mode transforms the response JSON to the format expected by the service specified as the emulation\_mode. Requests must still be sent in the regular Rasa format. |

###### Request Body schema: application/jsonrequired

| | |

| ----------- | ------------------------------------------ |

| text | stringMessage to be parsed |

| message\_id | stringOptional ID for message to be parsed |

##### Responses

**200**

Success

**400**

Bad Request

**401**

User is not authenticated.

**403**

User has insufficient permission.

**500**

An unexpected error occurred.

post/model/parse

Local development server

http://localhost:5005/model/parse

##### Request samples

* Payload

Content type

application/json

Copy

`{

"text": "Hello, I am Rasa!",

"message_id": "b2831e73-1407-4ba0-a861-0f30a42a2a5a"

}`

##### Response samples

* 200

* 400

* 401

* 403

* 500

Content type

application/json

Copy

Expand all Collapse all

`{

"entities": [

{

"start": 0,

"end": 0,

"value": "string",

"entity": "string",

"confidence": 0

}

],

"intent": {

"confidence": 0.6323,

"name": "greet"

},

"text": "Hello!",

"commands": [

{

"command": "start flow",

"flow": "transfer_money"

}

]

}`

#### [](#tag/Model/operation/replaceModel)Replace the currently loaded model

Updates the currently loaded model. First, tries to load the model from the local (note: local to Rasa server) storage system. Secondly, tries to load the model from the provided model server configuration. Last, tries to load the model from the provided remote storage.

###### Authorizations:

*TokenAuth\*\*JWT*

###### Request Body schema: application/jsonrequired

| | |

| --------------- | ---------------------------------------------------------------------------- |

| model\_file | stringPath to model file |

| model\_server | object (EndpointConfig) |

| remote\_storage | stringEnum: "aws" "gcs" "azure"Name of remote storage system |

##### Responses

**204**

Model was successfully replaced.

**400**

Bad Request

**401**

User is not authenticated.

**403**

User has insufficient permission.

**500**

An unexpected error occurred.

put/model

Local development server

http://localhost:5005/model

##### Request samples

* Payload

Content type

application/json

Copy

Expand all Collapse all

`{

"model_file": "/absolute-path-to-models-directory/models/20190512.tar.gz",

"model_server": {

"url": "string",

"params": { },

"headers": { },

"basic_auth": { },

"token": "string",

"token_name": "string",

"wait_time_between_pulls": 0

},

"remote_storage": "aws"

}`

##### Response samples

* 400

* 401

* 403

* 500

Content type

application/json

Copy

`{

"version": "1.0.0",

"status": "failure",

"reason": "BadRequest",

"code": 400

}`

#### [](#tag/Model/operation/unloadModel)Unload the trained model

Unloads the currently loaded trained model from the server.

###### Authorizations:

*TokenAuth\*\*JWT*

##### Responses

**204**

Model was sucessfully unloaded.

**401**

User is not authenticated.

**403**

User has insufficient permission.

delete/model

Local development server

http://localhost:5005/model

##### Response samples

* 401

* 403

Content type

application/json

Copy

`{

"version": "1.0.0",

"status": "failure",

"reason": "NotAuthenticated",

"message": "User is not authenticated to access resource.",

"code": 401

}`