Every engineering team we spoke with during this evaluation told the same story. The LLM agent demo worked. The pilot impressed leadership. Then production happened.

Agents that reasoned well in testing hallucinated under real traffic. Multi-step workflows broke when a single tool call failed. Observability was nonexistent. And nobody could explain to compliance why the agent made a specific decision.

The best AI agent framework is not the one that builds the most impressive demo. It’s the one that stays reliable when complexity, traffic, and regulatory scrutiny increase.

We evaluated 8 AI agent frameworks across orchestration architecture, governance controls, deployment flexibility, voice capability, and production readiness. Each framework was assessed on its ability to close the gap between LLM prototype and production system.

Best AI Agent Frameworks in 2026: Quick Comparison Table

How We Evaluated These AI Agent Frameworks

Our team evaluated each platform across seven weighted dimensions. We analyzed aggregated user reviews from G2 and Capterra, reviewed public pricing, tested deployment workflows, and consulted enterprise engineering teams running conversational AI in production.

We prioritized platforms that enterprise buyers in regulated industries (financial services, telco, healthcare, government) would encounter during a real evaluation cycle.

Each platform was assessed on its production-readiness, not on demo-day performance.

Our Scoring Methodology

The 8 Best Enterprise AI Agent Frameworks in 2026

#1. Rasa: Best AI Agent Framework Overall for Enterprise

Score: 9.4/10. Highest marks for governance (10/10), deployment flexibility (10/10), and voice capability (10/10). Scored lower on community size (7/10) vs. LangChain.

Rasa is the developer platform for enterprise AI agents. It delivers the control of building in-house, the speed of buying off-the-shelf, and the governance required to operate at enterprise scale. N26, Deutsche Telekom, and Swisscom run Rasa in production across banking, telco, and customer service.

Best for engineering leads and platform architects at regulated enterprises with technical teams in-house (financial services, healthcare, telco, insurance) that need code-first control, self-hosted deployment, and production-grade orchestration across voice and digital channels.

Product Overview

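The gap between an LLM demo and a production agent is governance. Most frameworks let the LLM decide what to do next. Rasa CALM structures that decision differently: the LLM interprets what the user wants, and business logic you define decides what the agent does next.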

Pain 1: Agent behavior is unpredictable when it spans multiple tools and systems.

Rasa structures multi-turn agent interactions across tools, databases, and channels using guided skills for critical workflows and prompt-driven skills where flexibility is valuable.

The Orchestrator combines LLM fluency with business-rule enforcement in one system. You define the guardrails. The agent stays inside them.
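Here is a minimal plain-Python sketch of the pattern described above. An LLM (stubbed out here) proposes a command, and deterministic code decides whether a guided skill runs or a prompt-driven reply is allowed. `Command`, `transfer_money`, the 1,000 threshold, and `orchestrate` are hypothetical illustrations, not Rasa's API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

@dataclass
class Command:
    """What the LLM proposes after reading the user's message (stubbed here)."""
    skill: str
    slots: dict = field(default_factory=dict)

def transfer_money(slots: dict) -> str:
    """Guided skill: fixed, deterministic steps for a high-stakes workflow."""
    amount = slots.get("amount")
    if amount is None or amount <= 0:
        return "Please tell me a positive amount to transfer."
    if amount > 1_000:  # hypothetical business rule
        return "Transfers above 1,000 need human review; connecting you to an agent."
    return f"Transferring {amount}."

GUIDED_SKILLS: Dict[str, Callable[[dict], str]] = {"transfer_money": transfer_money}

def prompt_driven_reply(user_text: str) -> str:
    """Placeholder for a free-form LLM answer where flexibility is acceptable."""
    return f"(LLM-generated answer to {user_text!r})"

def orchestrate(user_text: str, command: Command) -> str:
    """The LLM only proposes; code decides which path actually runs."""
    handler = GUIDED_SKILLS.get(command.skill)
    if handler is not None:
        return handler(command.slots)
    return prompt_driven_reply(user_text)
```

However the LLM phrases its proposal, a transfer above the threshold never executes automatically. The rule lives in code, not in a prompt.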

Pain 2: Inconsistency across voice and digital agent channels

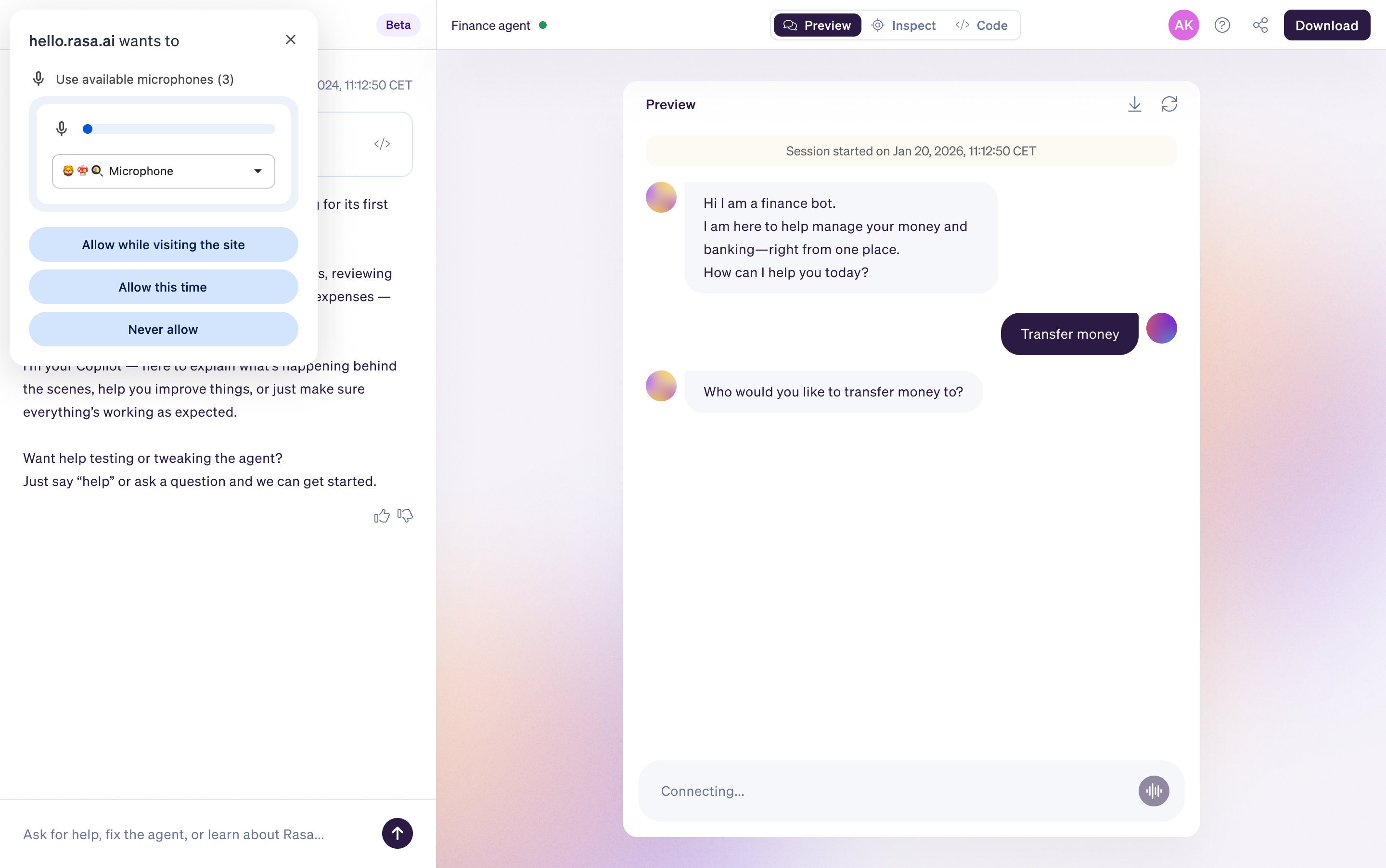

Most frameworks handle text. Rasa extends the same orchestration logic to voice channels: a voice agent that keeps pace with real callers (interruptions, corrections, and emotion) while still acting safely.

Same policies, same integrations, same analytics. Built-in Voice Stream connectors for Twilio Media Streams, Jambonz, AudioCodes, and Genesys Cloud. Choose your own ASR and TTS providers (Deepgram, Cartesia, Azure, Rime). No separate voice stack to maintain.

Pain 3: Governance and auditability in regulated environments

With self-hosted deployment, agent data stays in your environment rather than on a third-party cloud platform.

Rasa does not host any customer data, systems, or applications. Every Orchestrator decision is traceable, not buried in logs. Full audit trails for compliance review. On-prem isn’t an add-on; it’s the default, designed for regulated industries.

This is how the architecture works, not a feature on a roadmap.

Pricing

Developer Edition (Free): Full access to Rasa. One bot per company, up to 1,000 external conversations/month (100 for internal agents). Community support via the Rasa Forum. Designed for individual developers exploring agent projects.

Enterprise (Custom): Premium support, dedicated CSM, advanced security features, custom onboarding. Contact Rasa for a quote.

Pricing is based on annual conversation volume, not per-user or per-seat.

Integrations and Extensibility

- MCP server integration (beta), A2A (Agent-to-Agent) protocol (beta), custom Action Server for backend system connectivity.

- CRM integrations (Salesforce, Zendesk), ITSM connectors, ERP, and contact center systems built through Action Server custom actions.

- Teams can replace or extend core engine modules: RAG pipeline, rephraser, command generator, NLU pipelines.

- This is extensibility at the engine level, not through configuration menus.

- Supports any LLM provider.
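"Supports any LLM provider" usually comes down to agent logic depending on a narrow interface rather than on a vendor SDK. The generic Python sketch below uses `typing.Protocol` to show that idea. `ChatModel`, `rephrase`, and the two stub providers are hypothetical; this is not how Rasa configures providers.

```python
from typing import Protocol

class ChatModel(Protocol):
    """The only surface agent logic is allowed to depend on."""
    def complete(self, prompt: str) -> str: ...

class EchoModel:
    """Stand-in for one provider's client."""
    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"

class UpperModel:
    """Stand-in for a second provider's client."""
    def complete(self, prompt: str) -> str:
        return prompt.upper()

def rephrase(model: ChatModel, text: str) -> str:
    # Swapping providers means passing a different object; this function never changes.
    return model.complete(f"Rephrase politely: {text}")
```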

Deployment and Setup

- Self-hosted in your environment from day one.

- On-premise, private cloud, or hybrid deployment.

- The fastest path to an on-prem or private cloud deployment for enterprise AI agents.

Swisscom rebuilt its customer service agent using the CALM AI agent framework and went from prototype to production in 20 weeks, doubling automation rates and cutting costs by 50%.

N26 uses Rasa for regulated banking customer service.

Tradeoffs

Rasa CALM requires a builder mindset and meaningful engineering investment. It is more framework than you need if you want a simple out-of-the-box agent live in two weeks.

Teams need Python developers and familiarity with conversational AI architecture. In exchange for a steeper learning curve, you get production-grade governance and ownership.

Support

- Enterprise tier includes premium support with a dedicated customer success manager.

- Community support via the Rasa Forum.

- Documentation at rasa.com/docs.

- Learning resources at learning.rasa.com.

Mini Case Study

Swisscom, Switzerland's leading telco, rebuilt its customer service agent Sam using Rasa's CALM framework. Prototype to production in 20 weeks. Doubled automation rates. Cut operational costs by 50%. Zero-shot intent recognition made development 1.6x faster.


#2. LangChain / LangGraph: Best AI Agent Framework for Flexibility and Integration Breadth

Score: 7.6/10. Highest marks for extensibility (9/10) and community (9/10). Scored lower on governance (4/10), voice (0/10), and enterprise deployment support (5/10).

Best for engineering teams that need rapid prototyping with the broadest integration ecosystem and are willing to build governance layers themselves.

Product Overview

- LangChain is the most widely adopted open source LLM agent framework, with 25+ million downloads.

- Modular architecture for chains, agents, memory, and tool integrations.

- LangGraph adds graph-based state management with explicit nodes, edges, and persistence for multi-step reasoning.

- Supports OpenAI, Anthropic, Google, and open-source models.

- LangSmith provides observability, tracing, and evaluation.
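To make "explicit nodes, edges, and persistence" concrete, here is a framework-free Python sketch of the pattern. It does not use LangGraph's actual API. The node names, the routing rule, and the in-memory checkpoint list are illustrative assumptions.

```python
from typing import Callable, Dict, List, Optional, Tuple

State = Dict[str, str]
Node = Callable[[State], State]

def classify(state: State) -> State:
    intent = "refund" if "refund" in state["input"].lower() else "other"
    return {**state, "intent": intent}

def lookup_order(state: State) -> State:
    return {**state, "order_status": "delivered"}  # stand-in for a real tool call

def respond(state: State) -> State:
    if state["intent"] == "refund":
        message = f"Your order is {state['order_status']}; starting a refund."
    else:
        message = "How else can I help?"
    return {**state, "output": message}

NODES: Dict[str, Node] = {"classify": classify, "lookup_order": lookup_order, "respond": respond}

def next_node(current: str, state: State) -> Optional[str]:
    """Explicit edges, including one conditional edge out of 'classify'."""
    if current == "classify":
        return "lookup_order" if state["intent"] == "refund" else "respond"
    if current == "lookup_order":
        return "respond"
    return None  # 'respond' is the terminal node

def run(state: State, start: str = "classify",
        checkpoints: Optional[List[Tuple[str, State]]] = None) -> State:
    node: Optional[str] = start
    while node is not None:
        state = NODES[node](state)
        if checkpoints is not None:
            checkpoints.append((node, dict(state)))  # snapshot after every step
        node = next_node(node, state)
    return state
```

The checkpoint list is what makes a run resumable and inspectable after the fact. A production system would write those snapshots to durable storage.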

Pricing

- LangChain/LangGraph: free and open source (MIT).

- LangSmith (observability): Developer free, Plus $39/seat/month, Enterprise custom.

Deployment and Integrations

- Self-managed deployment.

- No hosted runtime.

- LangServe exposes agents as REST APIs.

- Integrations with vector databases (Pinecone, ChromaDB), data sources, and 100+ tools.

Setup

- Hours for basic prototypes.

- Weeks to months for production-grade deployments with governance, testing, and monitoring layers.

Tradeoffs

- Gets prototypes live fast, but production governance is your responsibility.

- Complexity creeps as the agent spans more tools and steps.

- Breaking changes between major versions have burned teams.

- No built-in voice capability.

- No deterministic business logic layer.

- No self-hosted enterprise support without third-party providers.

- Abstraction layers can make debugging harder as workflows grow.

G2: 4.7/5 (37 reviews). No Capterra listing.

#3. Microsoft Agent Framework (AutoGen + Semantic Kernel): Best for Azure and .NET Ecosystem

Score: 7.2/10. Strong orchestration patterns (8/10) and Azure integration (8/10). Scored lower on GA maturity (6/10), voice (0/10), and community (5/10).

Best for enterprises deeply invested in Azure, Microsoft 365, and .NET that want a unified multi-agent framework with enterprise-grade middleware.

Product Overview

- Unifies AutoGen (research multi-agent orchestration) and Semantic Kernel (enterprise SDK) into one framework.

- Orchestration patterns: sequential, concurrent, group chat, handoff, and magentic (manager-driven task ledger).

- Supports Python and .NET. MCP and A2A protocol support.

- Azure AI Foundry Agent Service integration.

- GA targeted Q1 2026.
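The sketch below uses Python's `asyncio` to contrast the sequential and concurrent patterns listed above. It is a generic illustration, not Microsoft Agent Framework's API. `agent` is a hypothetical stand-in for an LLM-backed agent call.

```python
import asyncio
from typing import List

async def agent(name: str, task: str) -> str:
    """Stand-in for an LLM-backed agent; the sleep simulates a model or tool call."""
    await asyncio.sleep(0.01)
    return f"{name}:{task}"

async def sequential(task: str, names: List[str]) -> str:
    # Each agent works on the previous agent's output, like a pipeline.
    result = task
    for name in names:
        result = await agent(name, result)
    return result

async def concurrent(task: str, names: List[str]) -> List[str]:
    # Every agent gets the same input at once; gather preserves input order.
    return list(await asyncio.gather(*(agent(name, task) for name in names)))
```

The sequential version takes roughly the sum of the agents' latencies. The concurrent version takes roughly as long as the slowest single agent.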

Pricing

- Framework: free and open source (MIT).

- Azure AI Foundry services: usage-based Azure pricing.

Deployment and Integrations

- Azure Cloud via AI Foundry.

- Self-managed deployment is possible.

- Deep integration with Microsoft 365, Dynamics 365, and Azure services.

- Elasticsearch connector available.

Setup

Weeks for Azure-native deployments. Longer for teams not already on Microsoft infrastructure.

Tradeoffs

- Strongest choice if you are already on Azure.

- AutoGen and Semantic Kernel are now in maintenance mode (bug fixes only, no new features).

- GA is targeted for Q1 2026, so enterprise teams adopting now take on public-preview risk.

- Tight Azure coupling limits portability.

- No native voice channel connectors.

- Steeper learning curve than LangChain for teams outside the Microsoft ecosystem.

No Capterra listing.

#4. CrewAI: Best AI Agent Framework for Structured Multi-Agent Collaboration

Best for teams building coordinated multi-agent workflows where each agent has a defined role, goal, and set of tools.

Score: 7.0/10. Strong multi-agent architecture (8/10). Scored lower on governance (4/10), voice (0/10), and enterprise deployment support (5/10).

Product Overview

- Define agents with specific roles, goals, and tools. Organize them into 'crews' that execute tasks sequentially or hierarchically.

- CrewAI Studio provides a visual editor alongside API and SDK access.

- Real-time tracing for every agent step.

- Adopted by 60% of Fortune 500 companies (claimed).

- 450+ million workflows/month processed.
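Here is a plain-Python sketch of the role/goal/crew idea run as a sequential process. `RoleAgent`, `CrewTask`, and `run_crew` are hypothetical names. They deliberately do not mirror CrewAI's real classes or signatures, and each tool is stubbed as a simple function.

```python
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class RoleAgent:
    role: str
    goal: str
    tool: Callable[[str], str]  # one stubbed tool per agent keeps the sketch small

@dataclass
class CrewTask:
    description: str
    agent: RoleAgent

def run_crew(tasks: List[CrewTask]) -> List[str]:
    """Sequential process: each task sees the previous task's output as context."""
    context = ""
    outputs: List[str] = []
    for task in tasks:
        prompt = (f"[{task.agent.role} | goal: {task.agent.goal}] "
                  f"{task.description} | context: {context}")
        context = task.agent.tool(prompt)
        outputs.append(context)
    return outputs

researcher = RoleAgent("Researcher", "Collect facts", lambda prompt: "facts")
writer = RoleAgent("Writer", "Draft a summary",
                   lambda prompt: "summary of " + prompt.split("context: ")[1])
results = run_crew([CrewTask("research topic X", researcher), CrewTask("write it up", writer)])
```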

Pricing

- Open-source core: free (Apache 2.0).

- Cloud: Basic free (50 executions/month), Professional $25/month (100 executions), Enterprise custom (up to 30,000 executions, SOC 2, SSO, RBAC).

- Ultra tier reported at $120,000/year.

- Self-hosted OSS has no execution limits.
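A little arithmetic shows why execution-based pricing escalates. The sketch assumes the Professional figures above ($25/month, 100 executions) plus a $0.50 charge per execution over the limit. Whether that overage rate applies to this tier is an assumption, so check current pricing.

```python
def monthly_execution_cost(executions: int, base_fee: float, included: int,
                           overage_rate: float) -> float:
    """Base plan fee plus a per-execution charge above the included quota (assumed model)."""
    extra = max(0, executions - included)
    return base_fee + extra * overage_rate
```

Under these assumptions, 100 executions a month costs $25. At 1,000 executions the bill is $475, and at 10,000 it is $4,975.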

Deployment and Integrations

- Self-hosted via open-source.

- Cloud platform managed by CrewAI.

- Integrations with Gmail, Slack, HubSpot, Salesforce, and Notion.

- MCP support.

Setup

- Hours for basic crews using templates.

- Days for production deployments with custom tools and integrations.

Tradeoffs

- Role-based structure is intuitive.

- Execution-based pricing on the managed cloud escalates quickly.

- Open-source core requires self-hosting.

- No built-in voice capability.

- No deterministic business logic layer comparable to Rasa CALM.

- Top-tier (Ultra) pricing reaches a reported $120K/year.

- Smaller ecosystem than LangChain.

No Capterra listing.

#5. Vellum: Best AI Agent Framework for Low-Code Enterprise Agent Building

Best for teams that need both technical depth (SDK) and non-technical collaboration (visual builder, natural-language agent creation) with enterprise governance.

Score: 7.1/10. Strong evaluation tooling (8/10) and low-code builder (8/10). Scored lower on voice (0/10) and governance depth (5/10).

Product Overview

- Build agents through natural language (describe what you want), visual canvas (drag-and-drop), or code (TypeScript/Python SDK).

- Built-in evaluation framework for testing prompts, workflows, and agents before production.

- Version control, regression testing, rollback.

- RBAC and audit logs.

- SOC 2 Type II and HIPAA compliant.

Pricing

- Free tier (50 builder credits/month, 1 user).

- Pro from $25/month (5 users max).

- Business and Enterprise custom.

- VPC deployment on Enterprise.

Deployment and Integrations

- Cloud default.

- VPC and on-premise deployment on Enterprise.

- Integrates with OpenAI, Anthropic, Google, Azure OpenAI, Cohere, and other LLM providers.

Setup

- Hours for agent scaffolding via natural-language builder.

- Days for production workflows with evaluations.

Tradeoffs

- Strong evaluation and observability tooling.

- Credit-based pricing on lower tiers limits iteration speed.

- Pro plan capped at 5 users, forcing Enterprise upgrade.

- Relies on external LLM providers for inference.

- No native voice capability.

- No built-in conversational AI orchestration comparable to Rasa CALM for multi-turn dialogue.

Listed on Capterra, with limited reviews.

#6. Gumloop: Best AI Agent Framework for No-Code Model Orchestration

Best for non-technical teams that need to orchestrate and compare LLM models without writing code.

Score: 5.8/10. Useful for model management (7/10). Scored lower on orchestration (3/10), governance (2/10), voice (0/10), and deployment (3/10).

Product Overview

- Visual no-code interface for building AI workflows.

- Compare models side by side.

- Route requests to different providers based on cost, latency, or capability.

- API support for production integration.
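Routing by cost, latency, or capability is simple to express in code. This is a generic Python sketch, not Gumloop's implementation, which is no-code. The model names, prices, and latencies are made-up placeholders.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional

@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_1k_tokens: float  # USD, illustrative only
    p50_latency_ms: int
    capabilities: FrozenSet[str]

def route(options: List[ModelOption], need: str,
          max_latency_ms: Optional[int] = None) -> ModelOption:
    """Pick the cheapest model that has the capability and meets the latency budget."""
    candidates = [
        o for o in options
        if need in o.capabilities
        and (max_latency_ms is None or o.p50_latency_ms <= max_latency_ms)
    ]
    if not candidates:
        raise LookupError(f"no model satisfies need={need!r}")
    return min(candidates, key=lambda o: o.cost_per_1k_tokens)
```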

Pricing

- From $37/month.

- Enterprise pricing custom.

Deployment and Integrations

- Cloud-only.

- API integrations with major LLM providers.

Setup

- Same-day for basic model routing.

- Days for production workflows.

Tradeoffs

- Useful for model management and comparison, but not a full agent framework.

- No multi-agent orchestration.

- No dialogue management.

- No voice capability.

- No self-hosted deployment.

- Better suited as a model layer within a broader agent architecture.

No Capterra listing.

#7. OpenAI Swarm: Best for Multi-Agent Research and Experimentation

Best for research teams and developers experimenting with agent handoff patterns and multi-agent coordination.

Score: 4.2/10. Educational value (7/10). Explicitly experimental, not production-ready. Scored 0 on governance, deployment, voice, pricing, and support.

Product Overview

- Lightweight agent abstraction with handoff primitives from OpenAI.

- Agents transfer control to other agents mid-conversation.

- Simple Python interface.

- Designed for educational and experimental use.
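The handoff idea can be sketched without any library. Each agent's handler returns a reply, optionally paired with the agent that should take over. This mirrors the concept Swarm demonstrates, but `HandoffAgent` and `run_conversation` are hypothetical names, not Swarm's API.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

@dataclass
class HandoffAgent:
    name: str
    # Returns a reply and, optionally, the agent that should take over.
    handle: Callable[[str], Tuple[str, Optional["HandoffAgent"]]]

def run_conversation(start: HandoffAgent, messages: List[str]) -> List[str]:
    active = start
    transcript: List[str] = []
    for message in messages:
        reply, successor = active.handle(message)
        transcript.append(f"{active.name}: {reply}")
        if successor is not None:
            active = successor  # control transfers mid-conversation
    return transcript

billing = HandoffAgent("billing", lambda m: ("Billing here, I can help with that.", None))
triage = HandoffAgent(
    "triage",
    lambda m: ("Transferring you to billing.", billing) if "invoice" in m
    else ("How can I help?", None),
)
log = run_conversation(triage, ["hi", "question about my invoice", "it looks wrong"])
```

Like Swarm itself, this keeps no state beyond the active agent. That is part of why it works for learning the pattern but not for production.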

Pricing

- Free and open source (MIT).

- LLM costs via OpenAI API.

Deployment and Integrations

- Self-managed.

- No hosted runtime.

- OpenAI models only by default.

Setup

- Minutes for basic examples.

- Not designed for production deployment.

Tradeoffs

- OpenAI explicitly states that Swarm is experimental and not intended for production.

- No persistence, no memory management, no observability, no governance.

- Valuable for learning multi-agent patterns.

- Not a framework for enterprise deployments.

Reviews: N/A (experimental).

#8. Semantic Kernel: Best AI Agent Framework for .NET Enterprise Development

Best for .NET shops that need AI agent capability within existing enterprise applications and are willing to migrate to Microsoft Agent Framework long-term.

Score: 6.0/10. Good .NET integration (8/10). Now in maintenance mode. Scored lower on orchestration (5/10), governance (4/10), voice (0/10).

Product Overview

- Microsoft's lightweight SDK for building AI agents with .NET, Python, and Java.

- Pluggable architecture for LLM orchestration.

- Supports function calling, planning, and memory.

- Integrates with Azure OpenAI, OpenAI, and Hugging Face.

- Content moderation and telemetry are built in.

Pricing

- Free and open source (MIT).

- Azure services are usage-based.

Deployment and Integrations

- Self-managed or Azure deployment.

- Deep .NET ecosystem integration.

Setup

Hours for basic integration into existing .NET applications.

Tradeoffs

- Now in maintenance mode: bug fixes and security patches only, no new features.

- Microsoft Agent Framework is the successor.

- Teams starting new projects should evaluate the unified framework.

- Limited multi-agent patterns compared to AutoGen/CrewAI.

- No voice capability.

- .NET-first design limits appeal for Python-only teams.

No Capterra listing.

How to Choose the Best AI Agent Framework for Your Enterprise

Step 1: Define Your Governance and Deployment Model First

This is the non-negotiable filter. Does your organization have data sovereignty requirements, compliance mandates, or security policies that require agents to run in your own environment?

If yes, eliminate cloud-only managed frameworks before comparing any other capability.

Rasa deploys self-hosted from day one. LangChain and CrewAI OSS can be self-managed but lack enterprise support. Vellum offers VPC and on-premise deployment on its Enterprise tier.

The first question isn’t “Which features do I need?” It’s “Do I need to own this system, or am I comfortable renting it?”

If the agent touches regulated flows, high-value transactions, identity, payments, or account changes, the work is never “done.” It evolves.

Choose a platform built for that long game of continuous improvement with clear ownership.

Step 2: Map Your Agent Complexity

Simple FAQ deflection and single-turn lookups are a solved problem. Pressure-test each framework against your most complex agent use case, not your simplest.

If the demo only shows a linear happy path, push for multi-system tasks and exception handling under failure conditions.

Step 3: Assess LLM Governance and Constraint Controls

Every framework will claim LLM capability. The real differentiator is governance: can you define what the agent is and isn’t allowed to do? Can you enforce topic constraints, action policies, and maintain full audit trails at the conversation level?

The Rasa Orchestrator combines guided skills for high-stakes workflows with prompt-driven skills where flexibility is valuable. Most other frameworks rely on prompt engineering, which is not governance.
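The difference between prompt engineering and governance is where the rule lives. In this hypothetical Python sketch, the check runs in code after the LLM proposes an action, so a cleverly worded prompt cannot talk its way past it. `Policy`, `authorize`, and the refund limit are illustrative, not any framework's API.

```python
from dataclasses import dataclass
from typing import FrozenSet

@dataclass(frozen=True)
class Policy:
    allowed_actions: FrozenSet[str]
    blocked_topics: FrozenSet[str]
    max_refund: float

class PolicyViolation(Exception):
    pass

def authorize(policy: Policy, action: str, topic: str, amount: float = 0.0) -> None:
    """Raise unless the proposed action is permitted; enforced in code, not in the prompt."""
    if topic in policy.blocked_topics:
        raise PolicyViolation(f"topic {topic!r} is out of scope")
    if action not in policy.allowed_actions:
        raise PolicyViolation(f"action {action!r} is not allowed")
    if action == "issue_refund" and amount > policy.max_refund:
        raise PolicyViolation(f"refund {amount} exceeds limit {policy.max_refund}")

def is_allowed(policy: Policy, action: str, topic: str, amount: float = 0.0) -> bool:
    try:
        authorize(policy, action, topic, amount)
        return True
    except PolicyViolation:
        return False
```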

Step 4: Evaluate Multi-Channel Deployment Architecture

Do the same agent logic, integrations, and analytics apply across voice, web chat, WhatsApp, and in-app? What happens when a user moves from chat to voice?

Rasa Voice is the only framework in this list with built-in voice channel connectors.

Step 5: Test Integration Depth Against Your Real Tech Stack

Ask for a live integration demonstration with your CRM, ITSM, or authentication system.

What happens when an integration fails mid-agent-turn? How are custom business rules defined: through a configuration UI, or at the code level?

Step 6: Run a Production Pilot on Your Most Complex Use Case

Pick one high-stakes customer or employee journey. Run it end-to-end in a pre-production environment. Track time-to-first-meaningful-action, containment rate, escalation quality, and whether the system behaves predictably across edge cases.

Time-to-first-meaningful-action isn’t the first reply. It’s the first real step forward. It’s the moment the customer feels progress, that something is actually getting handled.
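If the pilot logs three things per conversation, the two headline metrics fall out directly: when the conversation started, when the first meaningful action happened, and whether it escalated. The record layout below is an assumption. Using the median is a design choice that keeps a few slow outliers from dominating the result.

```python
import statistics
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class PilotConversation:
    started_at: float                # seconds since an arbitrary epoch
    first_action_at: Optional[float]  # first real step (e.g. account located), not first reply
    escalated: bool

def time_to_first_meaningful_action(convs: List[PilotConversation]) -> Optional[float]:
    """Median seconds from start to first meaningful action, over conversations that reached one."""
    deltas = [c.first_action_at - c.started_at for c in convs if c.first_action_at is not None]
    return statistics.median(deltas) if deltas else None

def containment_rate(convs: List[PilotConversation]) -> float:
    """Share of conversations resolved without escalation to a human."""
    return sum(not c.escalated for c in convs) / len(convs)
```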

Step 7: Evaluate Total Cost of Ownership and Framework Longevity

Compare beyond license or open-source cost: implementation engineering time, ongoing maintenance overhead, the cost of absorbing upstream breaking changes (LangChain has had several major version rewrites), and the cost of switching if the framework hits its architectural ceiling in 24 months.

AI Agent Framework Pricing Models and Costs in 2026

Pricing in the AI agent framework category falls into four models. Each creates different cost curves at scale.

Open source (free): LangChain, CrewAI OSS, AutoGen, OpenAI Swarm, Semantic Kernel. The real cost is engineering time: building governance, testing, monitoring, and deployment infrastructure. Hidden costs typically exceed platform license fees within 6 months.

Per-execution: CrewAI managed cloud charges per crew execution. Affordable at pilot scale, unpredictable at enterprise volume. $0.50 per additional execution above plan limits.

Credit-based: Vellum uses builder credits for agent creation. Limits iteration speed on lower tiers.

Enterprise custom: Rasa Enterprise, Vellum Enterprise, CrewAI Enterprise. Annual conversation-volume or execution-volume licensing. Contact the vendor for pricing.

Token-based pricing (LLM API costs) compounds unpredictably at scale across all frameworks. Factor this into TCO regardless of the framework licensing model.
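One reason token costs compound is that many chat-style LLM APIs are stateless. Applications typically resend the system prompt and the full conversation history on every turn. Below is a rough estimator under that assumption. It ignores output tokens, prompt caching, and history truncation, and all numbers are illustrative.

```python
def conversation_input_tokens(turns: int, system_tokens: int, tokens_per_turn: int) -> int:
    """Total input tokens when every turn resends the system prompt plus the full history.

    At turn k the request carries system_tokens + k * tokens_per_turn tokens,
    so the total grows with the square of the number of turns.
    """
    return turns * system_tokens + tokens_per_turn * turns * (turns + 1) // 2

def monthly_input_cost(conversations: int, turns: int, system_tokens: int,
                       tokens_per_turn: int, usd_per_million: float) -> float:
    tokens = conversations * conversation_input_tokens(turns, system_tokens, tokens_per_turn)
    return tokens * usd_per_million / 1_000_000
```

Take a 500-token system prompt and 200 tokens per turn. A 5-turn conversation sends 5,500 input tokens, while a 10-turn conversation sends 16,000: twice the turns, nearly three times the tokens.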

Questions to Ask Before Choosing an Agentic AI Framework

1. Governance model

Can you define what agents can and cannot do at the action level, not just the prompt level?

2. Deployment

Self-hosted, private cloud, or cloud-only? Who controls the infrastructure?

3. LLM lock-in

Can you swap LLM providers without rewriting agent logic?

4. Voice capability

Does the framework handle voice natively, or does it require assembling a separate stack?

5. Extensibility ceiling

Can you modify core engine modules, or are you limited to what the vendor pre-built?

6. Breaking changes

What is the framework's track record on backward compatibility? How disruptive have past major versions been?

7. Total cost of production

What does year-two cost when you factor in maintenance, upgrades, monitoring, and the engineering time to keep agents reliable?

AI Agent Framework Integrations: What to Verify Before Building

Integration depth is where architectural decisions fail in production. A framework that connects to your CRM during a demo may break silently when the CRM returns an unexpected response during a live agent turn.

Critical questions: What happens when an integration fails mid-conversation? Does the agent recover, escalate, or freeze? How are custom business rules defined for each integration point? Is the integration native, API-based, or through a third-party connector?
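The hypothetical Python sketch below makes "recover or escalate, never freeze" concrete. `call` stands in for any CRM client call. Timeouts are retried with exponential backoff, and an unexpected response shape escalates immediately rather than letting the agent guess. The retry count and the `status` field check are assumptions.

```python
import time
from typing import Any, Callable, Dict

def call_with_fallback(call: Callable[[], Any], retries: int = 2,
                       backoff_s: float = 0.5) -> Dict[str, Any]:
    """Retry timeouts, refuse to guess on odd responses, and escalate instead of freezing."""
    for attempt in range(retries + 1):
        try:
            response = call()
        except TimeoutError:
            if attempt < retries:
                time.sleep(backoff_s * 2 ** attempt)  # exponential backoff between tries
            continue
        if not isinstance(response, dict) or "status" not in response:
            # Unexpected shape: hand off to a human rather than act on bad data.
            return {"outcome": "escalate", "reason": "unexpected CRM response"}
        return {"outcome": "ok", "data": response}
    return {"outcome": "escalate", "reason": "CRM timed out after retries"}
```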

Key integrations to verify: CRM (Salesforce, Zendesk, HubSpot), ITSM (ServiceNow, Jira), authentication systems, payment processing, telephony (Twilio, Genesys, AudioCodes), and any system your agent needs to read from or write to during a conversation.

Key Features to Consider in Your AI Agent Framework Comparison

Multi-Turn Dialogue and Task Orchestration

Agents that handle single questions are easy. Production agents manage conversations that span multiple systems, context switches, and fallback scenarios. The framework must orchestrate across these without losing state.
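A common way to keep state across context switches is a stack of in-progress tasks. A digression pushes a new task, and finishing it resumes the interrupted one with its collected values intact. This is a minimal Python sketch of that generic technique, not any specific framework's dialogue manager; the names are illustrative.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class Flow:
    name: str
    slots: dict = field(default_factory=dict)

@dataclass
class DialogueState:
    stack: List[Flow] = field(default_factory=list)

    def start(self, name: str) -> None:
        self.stack.append(Flow(name))  # interrupts whatever flow was active

    def active(self) -> Optional[Flow]:
        return self.stack[-1] if self.stack else None

    def finish(self) -> Optional[Flow]:
        """Complete the active flow and resume the one underneath, slots intact."""
        self.stack.pop()
        return self.active()
```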

LLM Governance and Constraint Controls

Can you define what the agent is allowed to do and say? Rasa CALM architecturally separates LLM understanding from business-logic execution, a patented design. Most frameworks rely on prompt engineering, which is not governance.

Multi-Agent Coordination and Handoff Protocols

Enterprise deployments need multiple agents coordinating with shared state, clean handoffs, and unified memory. CrewAI and Microsoft Agent Framework provide explicit multi-agent patterns.

Native Multi-Channel Support (Voice + Digital)

Rasa Voice is the only framework in this list with built-in voice channel connectors. Every other framework requires third-party voice integration.

Self-Hosted and On-Premises Deployment

Regulated industries need agents running in their environment. Cloud-only frameworks are disqualified for financial services, healthcare, and government.

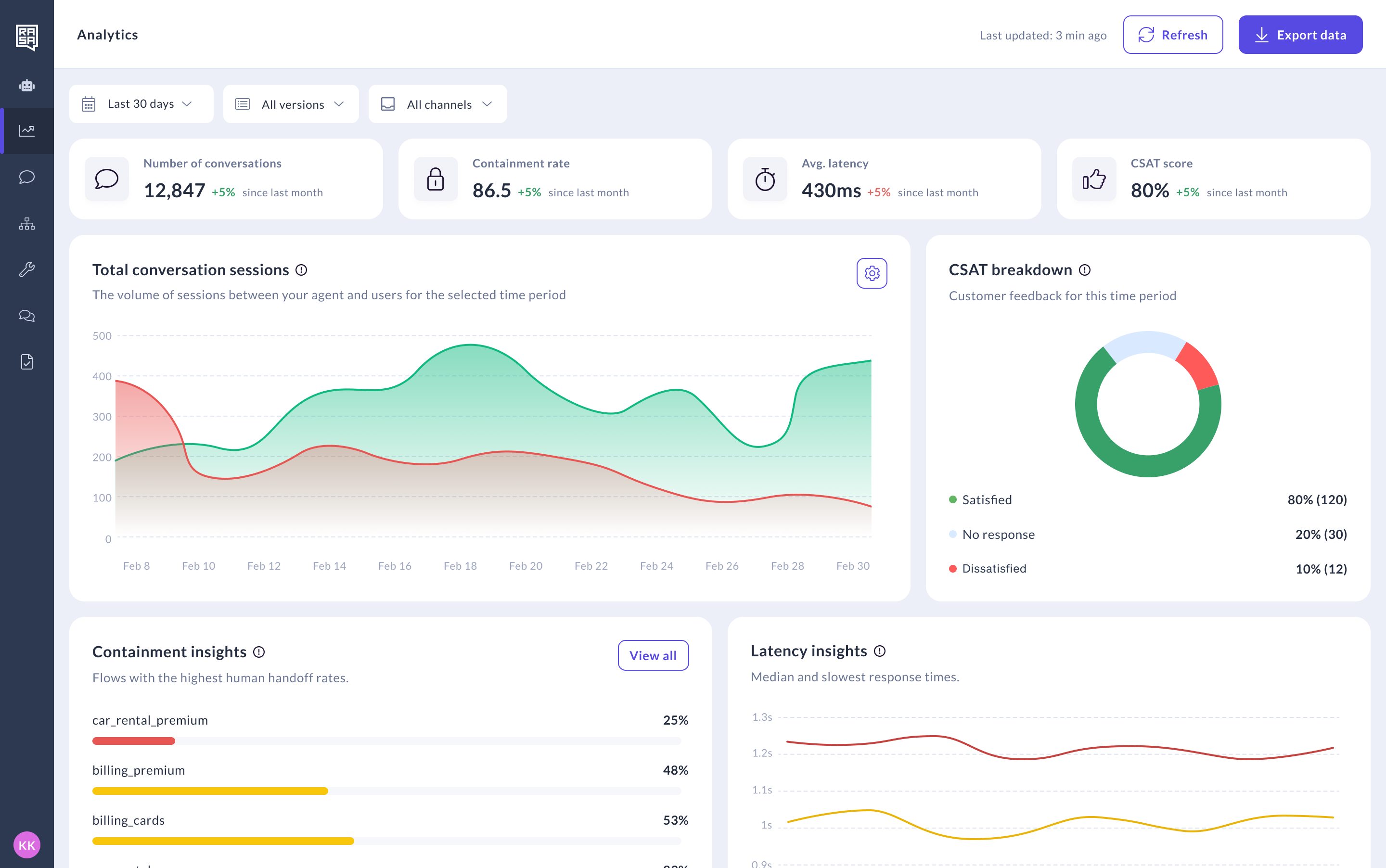

Observability, Audit Trails, and Conversation-Level Analytics

Trace every agent decision: what data it accessed, what tool it called, what action it took, and why. Required for production debugging and compliance.
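The four questions above (what data, which tool, what action, why) map naturally onto a structured log record. This is a minimal Python sketch; the field names and the JSON-lines format are assumptions, not a specific framework's trace schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict, List

@dataclass
class AuditEvent:
    conversation_id: str
    step: int
    data_accessed: List[str]
    tool_called: str
    action_taken: str
    reason: str
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

class AuditLog:
    """Append-only, one JSON line per decision, so traces can be exported or replayed."""
    def __init__(self) -> None:
        self._lines: List[str] = []

    def record(self, event: AuditEvent) -> None:
        self._lines.append(json.dumps(asdict(event), sort_keys=True))

    def for_conversation(self, conversation_id: str) -> List[Dict[str, Any]]:
        events = [json.loads(line) for line in self._lines]
        return [e for e in events if e["conversation_id"] == conversation_id]
```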

CRM and Enterprise Backend Integration Depth

Pre-built connectors save time. But the real test is what happens when the integration fails. Look for frameworks that handle failure gracefully, not silently.

Code-Level Extensibility

Can you replace core modules? Modify the RAG pipeline? Write custom actions? Configuration-only frameworks hit a ceiling. Rasa provides engine-level extensibility.
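The difference between configuration and engine-level extensibility is whether you can swap the component itself. This generic Python sketch uses a component registry, and a team replaces the default retrieval step with its own function. `register`, `REGISTRY`, and the toy keyword retriever are hypothetical, not Rasa's extension API.

```python
from typing import Callable, Dict, List

Retriever = Callable[[str], List[str]]
REGISTRY: Dict[str, Retriever] = {}

def register(slot: str) -> Callable[[Retriever], Retriever]:
    """Decorator: install a component for a named slot, replacing any previous one."""
    def wrap(fn: Retriever) -> Retriever:
        REGISTRY[slot] = fn
        return fn
    return wrap

@register("retriever")
def keyword_retriever(query: str) -> List[str]:
    # Toy default: return documents containing any word from the query.
    docs = ["reset your password in settings", "card fees are listed online"]
    return [d for d in docs if any(word in d for word in query.lower().split())]

def answer(query: str) -> str:
    # Engine code calls whatever is registered, so replacing the slot changes behavior.
    hits = REGISTRY["retriever"](query)
    return hits[0] if hits else "no match"
```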

Agent and Conversation Design Tooling

Visual flow builders, simulation environments, and testing tools accelerate development. Rasa Studio (beta), CrewAI Studio, and Vellum provide visual interfaces alongside code access.

What Is the Best AI Agent Framework for Regulated Industries?

Regulated industries (banking, healthcare, government) require self-hosted deployment, deterministic agent behavior, and full audit trails. Most open-source frameworks provide none of these out of the box.

Rasa CALM deploys in your environment. Its patented dialogue manager separates LLM understanding from business logic execution. Every agent action is traceable.

Rasa does not host any customer data, systems, or applications.

N26 uses Rasa for regulated banking customer service. Microsoft Agent Framework offers Azure-based governance suitable for regulated teams already on Azure.

What Is the Best AI Agent Framework for Enterprise Voice and Chat Agents?

Most agent frameworks are text-only. Voice requires real-time audio processing, speech provider integration, barge-in handling, and latency optimization.

Rasa Voice is the only framework in this evaluation with built-in voice channel connectors (Twilio Media Streams, Jambonz, AudioCodes, Genesys Cloud). The same CALM orchestration logic applies to both voice and chat. Context carries across channels.

Every other framework requires assembling a separate voice stack.

AI in Enterprise Agent Frameworks: What You Need to Know

LLM governance is the primary discussion topic in enterprise agent framework buying decisions. Every vendor claims AI capability. The differentiator is accountability.

How does the framework govern LLM behavior? Rasa CALM separates understanding (LLM) from execution (deterministic flows). Most frameworks let the LLM drive both, relying on prompt constraints that can be bypassed.

Can business teams define what agents can and cannot do? With Rasa, business rules are defined in deterministic flows, not prompts. Non-technical teams can design flows in Rasa Studio (beta) while developers own the integrations.

What audit trail exists for AI decisions? Rasa provides conversation-level tracing through deterministic flows. Every action is logged: what data was accessed, what tool was called, what response was generated.

What does the AI actually do vs. what humans still oversee? In Rasa CALM, the LLM handles intent classification and dialogue understanding. Deterministic flows handle action selection, policy enforcement, and response generation. Humans define the guardrails. The AI operates within them.

The right position: AI as a structured, governed tool operating within defined business policies, not an unconstrained reasoning engine. Rasa CALM's architecture follows this model, and it directly addresses buyer concerns about hallucination risk and regulatory exposure.

Is Rasa CALM Worth the Investment?

Three paths exist for enterprise AI agent frameworks:

Lightweight open-source library (LangChain, CrewAI OSS): Choose if you have high engineering capacity, low governance requirements, and are building rapid prototypes where breaking changes are tolerable.

Cloud-managed platform (Vellum, Gumloop): Choose if you want narrow scope, speed to deploy, and don't need self-hosted deployment or deterministic business logic.

Rasa CALM: Choose if you operate in a regulated industry, need self-hosted deployment, require deterministic governance over agent behavior, need voice + chat from one framework, and are building AI agents as a long-term production system.

Rasa CALM is for teams building AI agents as a long-term production system, not for teams that need a demo live by next Friday.

Which AI Agent Framework Is Right for Your Enterprise?

- Need production governance + ownership: Rasa CALM. Self-hosted, deterministic logic, native voice.

- Need rapid prototyping + broad integrations: LangChain/LangGraph. Largest ecosystem, fastest to first prototype.

- Need Azure-native orchestration: Microsoft Agent Framework. Deep Azure/M365 integration.

- Need multi-agent role-based collaboration: CrewAI. Structured crews with visual editor.

- Need low-code + enterprise governance: Vellum. Visual builder + SDK + evaluations.

- Need no-code model management: Gumloop. Model comparison without code.

- Need to experiment with multi-agent patterns: OpenAI Swarm. Educational, not production.

- Need .NET AI integration: Semantic Kernel (maintenance mode) or Microsoft Agent Framework.

Frequently Asked Questions

What is the best AI agent framework for enterprise?

Rasa, for teams needing self-hosted deployment, guided governance over agent behavior, and production-grade orchestration across voice and digital channels.

Rasa delivers the control of building in-house, the speed of buying off-the-shelf, and the governance required to operate at enterprise scale.

What is the best open-source AI agent framework?

LangChain for community size and integration breadth (MIT license). CrewAI for multi-agent collaboration (Apache 2.0). Rasa for enterprise conversational AI with self-hosted deployment and deterministic governance (open core).

All are open source at their core.

What is the best agentic framework for self-hosted deployment?

Rasa. Self-hosted from day one with on-premise and private cloud deployment. Agent data stays in your environment. Rasa does not host any customer data.

LangChain and CrewAI OSS can be self-managed but lack enterprise support and governance features.

Which AI agent framework gives the most control over LLM behavior?

Rasa. The Orchestrator’s patented dialogue management separates LLM understanding from action execution. Guided skills control what the agent does for high-stakes workflows. Prompt-driven skills handle exploratory conversations. You define the boundaries.

What is the best AI agent framework for developers in 2026?

LangChain for the broadest Python ecosystem and fastest prototyping.

Rasa CALM for the deepest Python-native conversational AI framework with engine-level extensibility.

CrewAI for intuitive multi-agent role-based development.

What are the best AI agent frameworks for multi-agent systems?

CrewAI for role-based agent collaboration with hierarchical task execution.

Microsoft Agent Framework for multi-agent orchestration patterns (sequential, concurrent, group chat, handoff).

Rasa for multi-agent orchestration with shared state across voice and chat channels.

What's the best AI agent framework for banking and financial services?

Rasa CALM is the strongest fit among these frameworks for banking and financial services. Self-hosted deployment keeps data in your environment. Deterministic business logic limits hallucination risk. Full audit trails support compliance.

N26 uses Rasa for regulated banking customer service.

How to evaluate AI agent frameworks for enterprise?

Test against your most complex agent use case, not your simplest. Verify deployment model, LLM governance, integration failure handling, voice capability, and total cost of ownership at production scale.

A demo on happy-path scenarios proves nothing.

Can one AI agent framework handle both voice and chat agents?

Rasa is the only framework in this evaluation with built-in voice channel connectors (Twilio, Jambonz, AudioCodes, Genesys) and cross-channel context continuity.

Every other framework requires third-party voice integration.

What is the best no-code AI agent builder?

Vellum for enterprise teams needing governance alongside no-code building. Gumloop for model orchestration without code. For enterprise-scale production, no-code alone is rarely sufficient.

Look for platforms combining no-code rapid iteration with code-level depth.

What is the best Python AI agent framework?

LangChain for breadth (largest Python AI framework community). Rasa CALM for depth (engine-level extensibility across dialogue management, NLU, RAG). CrewAI for multi-agent Python orchestration.

All three are Python-native.

Is there a free AI agent framework?

Yes. Rasa Developer Edition (free, 1,000 conversations/month, one bot). LangChain (MIT, fully free). CrewAI OSS (Apache 2.0, free, no execution limits). AutoGen (MIT, free). OpenAI Swarm (MIT, free, experimental). Semantic Kernel (MIT, free).

LLM API costs apply across all frameworks.

How much technical skill is needed to deploy enterprise AI agents?

All production-grade frameworks require developers (typically Python, with .NET also an option for the Microsoft frameworks) and familiarity with LLM concepts.

Rasa and LangChain require meaningful engineering investment.

Vellum and CrewAI Studio lower the barrier for non-technical team members with visual builders.

No-code is viable for prototyping but insufficient for production enterprise deployments.
