Rasa Pro 3.15 addresses three critical challenges teams face when deploying LLM-powered agents: visibility into model behavior, voice channel capabilities, and shipping with confidence.

LLM Observability with Langfuse

Without runtime observability of LLM inputs and outputs, teams struggle to understand how their conversational agents perform. You can't assess runtime metrics like latency and cost, and evaluating LLM outputs for specific tasks (such as command generation) becomes guesswork.

Rasa’s direct integration with Langfuse solves this. Every LLM call is automatically traced, recording inputs, outputs, and critical performance indicators. These traces enable you to run evaluations on production data, giving you the visibility needed to measure and improve LLM performance in real-world conditions. You can now assess how well your models handle actual user interactions, identify optimization opportunities, and make data-driven decisions about your LLM infrastructure.
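To make "evaluations on production data" a bit more concrete, here is a minimal sketch of the kind of roll-up you might run on exported trace records. The record shape (`component`, `latency_ms`, `cost_usd`) and the `summarize_traces` helper are assumptions made for illustration. They are not Langfuse's or Rasa's actual schema or API.

```python
from collections import defaultdict
from statistics import mean


def summarize_traces(traces):
    """Group trace records by component; report call count, mean latency, total cost.

    Each record is a plain dict with hypothetical keys:
    "component", "latency_ms", and "cost_usd".
    """
    grouped = defaultdict(list)
    for trace in traces:
        grouped[trace["component"]].append(trace)

    summary = {}
    for component, records in grouped.items():
        summary[component] = {
            "calls": len(records),
            "mean_latency_ms": mean(r["latency_ms"] for r in records),
            # Round to avoid floating-point noise in the reported total.
            "total_cost_usd": round(sum(r["cost_usd"] for r in records), 6),
        }
    return summary


# Made-up sample records, for illustration only.
sample = [
    {"component": "command_generator", "latency_ms": 800, "cost_usd": 0.002},
    {"component": "command_generator", "latency_ms": 1200, "cost_usd": 0.003},
    {"component": "rephraser", "latency_ms": 400, "cost_usd": 0.001},
]
report = summarize_traces(sample)
```

A per-component breakdown like this is one way to spot which LLM-backed step dominates latency or spend before you decide where to optimize.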

Voice Channels Get First-Class Treatment

- DTMF support means your agent can now handle phone keypad input natively. You can use it for menu navigation ("press 1 for sales") or for entering details such as account numbers or dates of birth. It is especially useful when voice recognition fails or users are in noisy environments: they can fall back to pressing buttons without leaving the conversation flow. This gives you the flexibility to support users who prefer the keypad over voice input while maintaining a consistent experience.

- Streaming generated responses from enterprise search or the rephraser in voice channels eliminates the awkward silence between user input and TTS output. The audio pipeline starts preparing as tokens arrive, not after the complete response is generated. Your customers experience lower perceived latency, which should translate into better satisfaction scores on voice calls.
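The streaming idea is easier to picture with a small example. The sketch below is not Rasa's implementation. It only illustrates the general technique of flushing complete sentences from a token stream so that speech synthesis can begin before generation finishes. The function name `sentences_from_tokens` and the sentence-boundary rule are assumptions chosen for illustration.

```python
import re
from typing import Iterable, Iterator

# Sentence-ending punctuation followed by whitespace marks a safe point to flush.
_SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


def sentences_from_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Yield each complete sentence as soon as it appears in the token stream."""
    buffer = ""
    for token in tokens:
        buffer += token
        parts = _SENTENCE_END.split(buffer)
        # Every part except the last is a finished sentence; hand it off now.
        for sentence in parts[:-1]:
            if sentence:
                yield sentence
        # The last part is still in progress; keep buffering it.
        buffer = parts[-1]
    # Flush whatever remains once the stream ends.
    if buffer.strip():
        yield buffer.strip()


# Simulated LLM token stream (illustrative data).
stream = ["Your ", "balance ", "is ", "$42. ", "Anything ", "else", "?"]
chunks = list(sentences_from_tokens(stream))
```

In a real voice pipeline, each yielded sentence would be sent to the TTS engine immediately, so the caller hears the first sentence while later ones are still being generated.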

Prevent Regressions in Generative Components with E2E Testing

E2E testing now evaluates responses from custom actions using grounding and relevance assertions. Custom actions that dispatch generative responses can be tested against ground truth with configurable thresholds, which means you can ship generative features without fear of regressions. You're no longer manually reviewing generated content before releases, and you catch issues before your users do.

Faster Testing Iteration

When a test suite breaks, you previously had to re-run everything to verify your fix. The new failed test export solves this by allowing you to isolate and re-run only the tests that failed.

Export failed tests during your initial run:

rasa test e2e -f failed_tests.yml

After fixing the issue, re-run only the failures:

rasa test e2e failed_tests.yml

Your QA cycles shrink because you're iterating on only the failures without waiting through hundreds of passing tests.

Rasa Studio Updates: CMS and Review Upgrades

Rasa Studio, Rasa’s user interface, gets two workflow improvements that reduce friction for non-technical team members managing production agents.

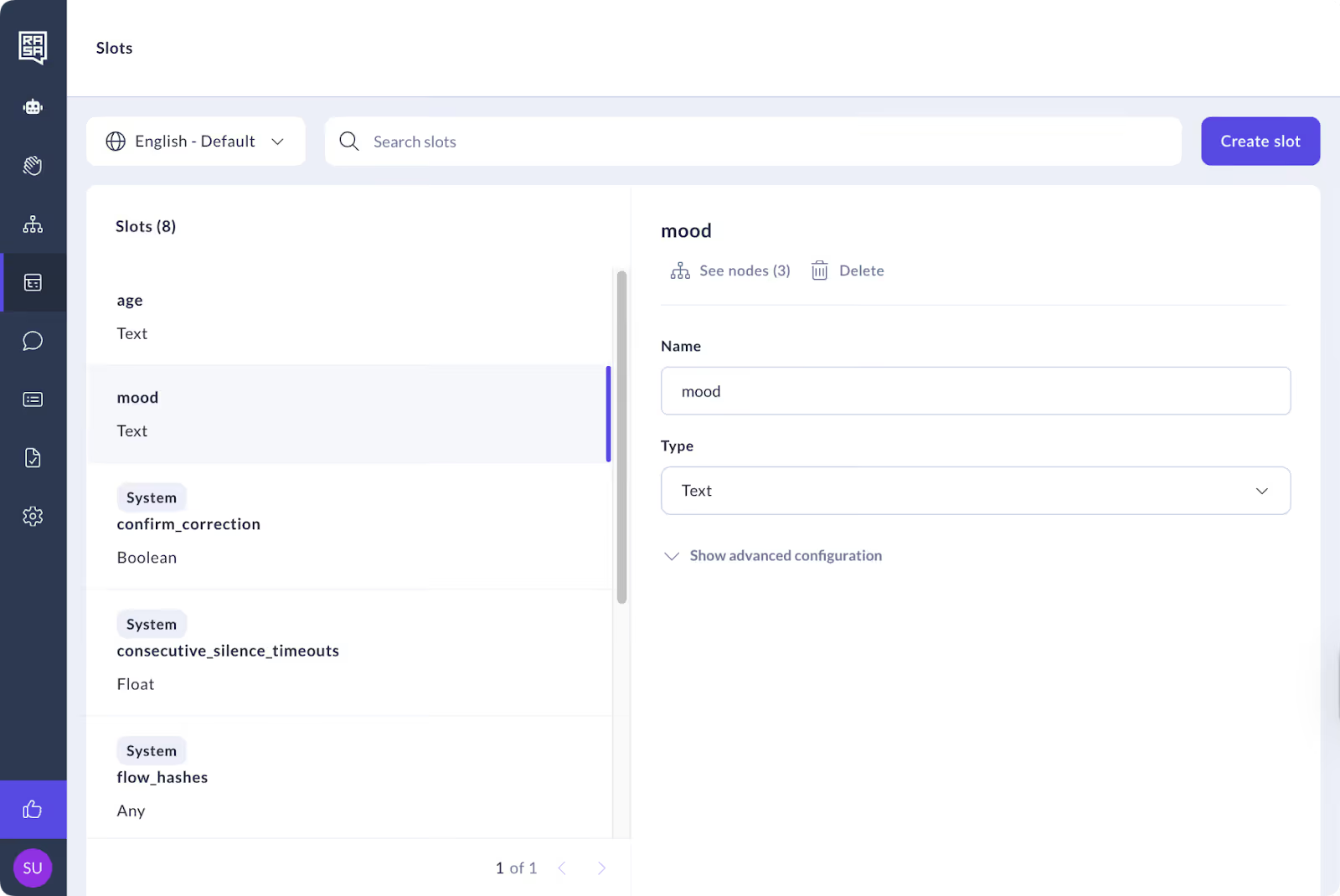

Centralized Slot Management

Slots now have their own CMS section, accessible from the navigation menu. Content managers can create, edit, and delete slots. The "See nodes" button shows exactly where each slot is referenced across your flows, which eliminates the guesswork when you need to understand slot usage or safely deprecate old slots. There is also a corresponding CMS for buttons and links.

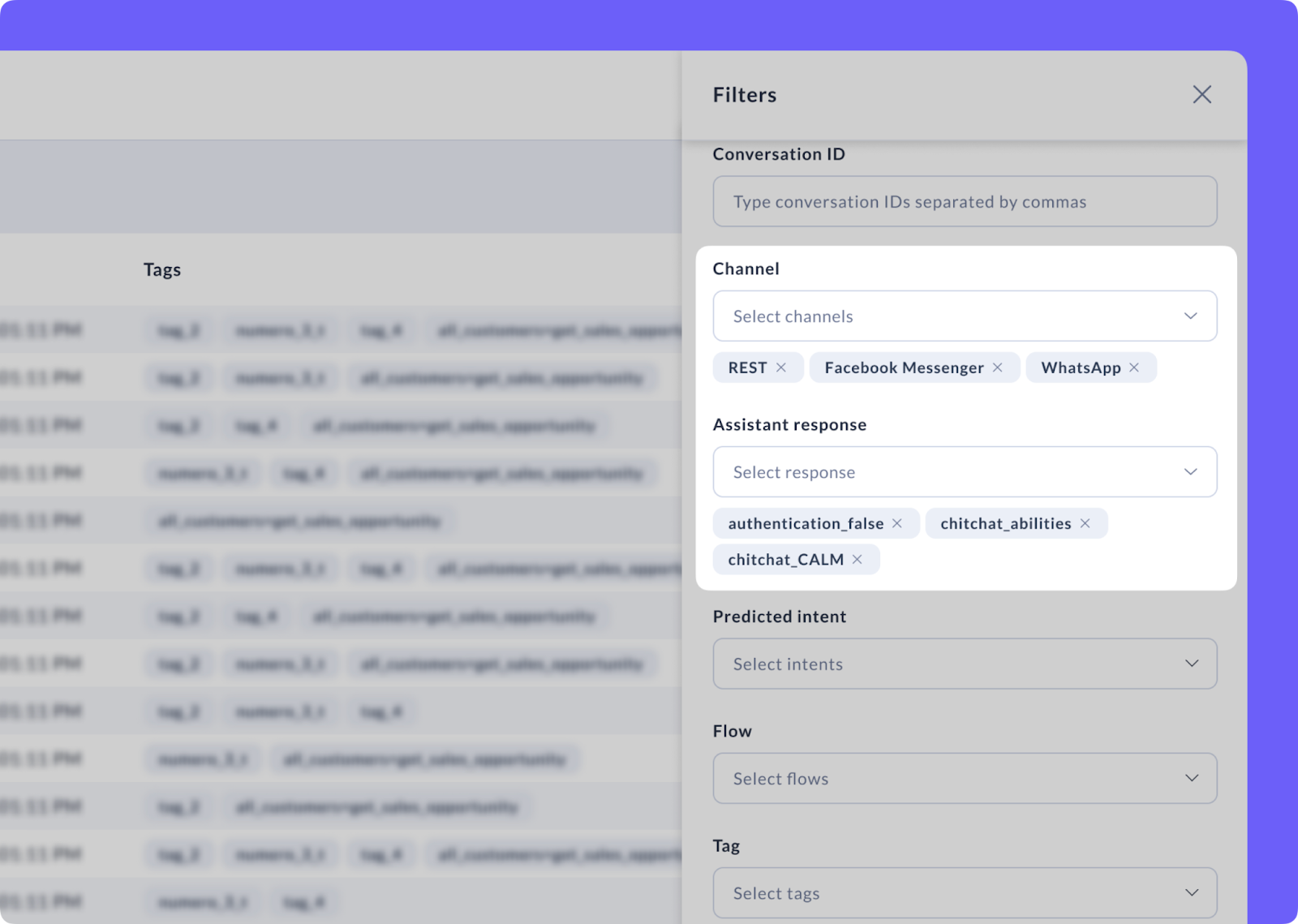

Smarter Conversation Review

Conversation Review now filters by channel and agent response. When you're debugging issues specific to voice channels or tracking down a problematic part of a conversation, you can narrow your search further instead of scrolling through thousands of unrelated conversations. This turns conversation analysis from a research project into a quick lookup.

User Experience Tweaks That Make a Difference

- Default DateTime awareness means LLMs understand concepts like "tomorrow" or even something like "quarter past 9, two days after yesterday" without requiring custom prompt engineering. Check out the new system prompt template defaults to see how it’s implemented.

- The clarification pattern can now be configured to cap the number of options presented, so users are not overwhelmed with choices.

With these additions, your team stops writing workaround code, and your agents feel more polished out of the box.

What This Means for Your Roadmap

- If you're evaluating production readiness, observability was probably on your checklist. With Langfuse, you can start checking that box.

- If voice customers often communicate with buttons or need to type in lots of numbers, native DTMF opens up new interaction modes.

- If generative testing felt too manual or brittle, you now have automation.

- If slots were hard to find and update, the slot management section of Rasa Studio should make them much easier to locate and edit.

Read the full changelog here.

Want to try Rasa today? Check out the Rasa Playground.

Questions? Comments? Get in touch with our team or join the community at forum.rasa.com